If your brand isn't being cited in AI search responses, you're invisible to a growing share of your highest-intent buyers - and your current analytics stack almost certainly isn't telling you that.

AI citation tracking is the discipline of systematically monitoring when, where, and how often AI platforms like ChatGPT, Perplexity, Claude, and Google AI Overviews reference your brand, content, or URLs in their generated answers. It's not a niche technical concern. By mid-2025, AI search platforms commanded 8.2% of total search traffic and were growing 150% year-over-year. More critically, they convert at 4 to 5 times the rate of traditional Google organic traffic. That combination makes AI citations one of the highest-leverage variables in modern demand generation - and one of the least-measured.

This guide covers the full picture: what AI citation tracking actually involves, which metrics matter, how to implement monitoring across platforms, and how to use the data to improve your citation share before your competitors solidify theirs.

Key takeaways

- AI citation tracking monitors your brand's presence in AI-generated answers across ChatGPT, Perplexity, Claude, Google AI Overviews, and Bing Copilot.

- Traditional SEO tools are structurally blind to citation visibility. Only 12% of ChatGPT's cited sources overlap with Google's top-10 results.

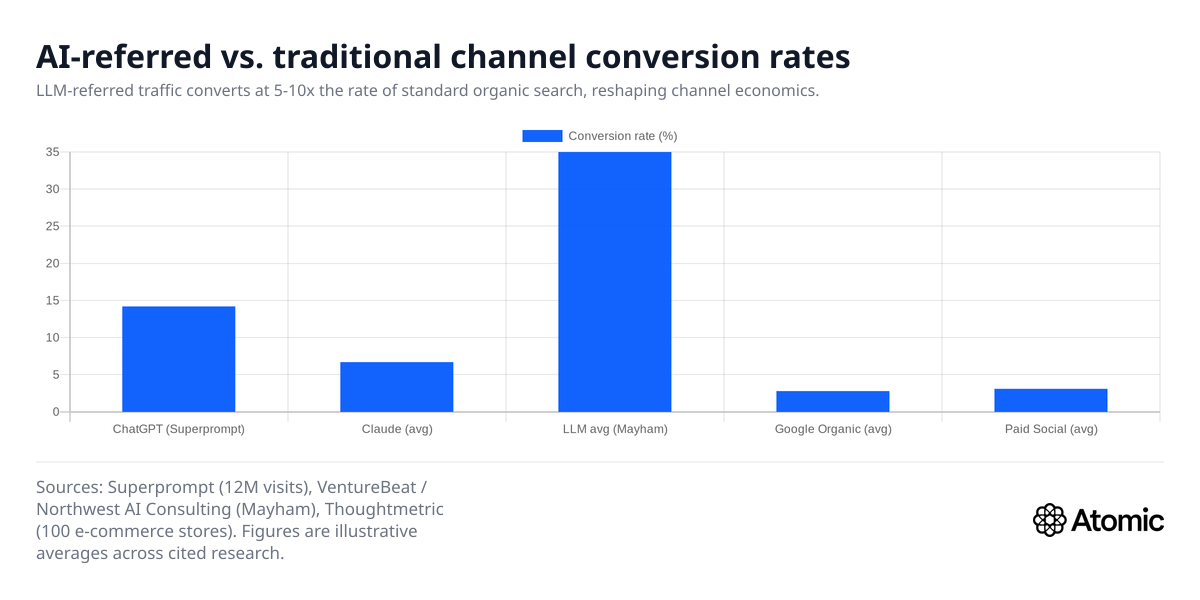

- AI-referred traffic converts at 14 to 40% depending on platform and industry - far above the 2 to 3% typical of organic search.

- Citation sources on AI platforms churn 40 to 60% monthly, which means snapshots are nearly useless. You need continuous tracking.

- The 30% benchmark - the 30 to 40% conversion rate some practitioners report for LLM-referred traffic - reflects the fundamentally different buyer intent arriving through AI channels.

- ChatGPT does not have a native citation-tracking console for publishers, but you can monitor it through synthetic query testing, referral log analysis, and dedicated AI monitoring platforms.

- Purpose-built platforms like Atomic track citation share across 10 AI platforms in a single dashboard, connecting that data to traffic and conversion outcomes.

What we'll cover

- What AI citation tracking is and why it diverges from traditional SEO

- The citation mechanics of each major AI platform

- Core metrics: what to measure and why

- The 30% conversion benchmark explained

- How to implement a citation tracking workflow

- Can ChatGPT and other AI tools find citations for you?

- Choosing the right tools and infrastructure

- FAQ section addressing common questions

What AI citation tracking actually is

In traditional SEO, visibility means ranking in blue-link results. You measure impressions, clicks, and position through Google Search Console, and rank tracking tools handle the rest.

AI citation tracking measures something structurally different. When someone asks Perplexity "What's the best project management tool for a remote team?", the answer they get is a synthesized paragraph that may or may not name your brand - and may or may not include a clickable link to your site. Whether or not your brand appears, and how it's described, is the signal that citation tracking captures.

The disconnect from traditional measurement runs deep. Research analyzing ChatGPT's citation patterns found only 12% overlap with URLs appearing on Google's first page. The other 88% came from sources ranking outside the top 10 or not ranking at all. You could be number one on Google for a category keyword while being completely absent from AI responses on the same topic. Conversely, you could rank eighth on Google but appear in the majority of relevant ChatGPT answers.

This divergence means your current SEO stack is measuring a different contest from the one increasingly determining who your buyers discover first. If you're not sure how much of your brand's presence this gap is costing you, a structured AI search visibility monitoring program is the fastest way to establish a baseline.

Three things citation tracking measures

Brand mentions: Does your company name appear in AI-generated answers, even without a link? Claude mentions brands in 97.3% of its responses - the highest rate among major models - but includes no external links by default. That means brand mention tracking and URL citation tracking are two distinct measurements requiring different methodologies.

URL citations: Does the AI platform include a direct link to one of your pages as a source? Perplexity cites sources inline with numbered references, making this relatively measurable. ChatGPT's Sources UI is less consistent in attribution.

Sentiment and framing: How does the AI describe your brand when it does mention you? Being cited as "one option among many" versus "the leading solution for X use case" has meaningfully different commercial outcomes, even if both register as a positive mention.

Why citation sources diverge so sharply from Google rankings

The divergence isn't random. It reflects how AI platforms are trained and how they retrieve information.

AI models weight community-edited, authoritative reference content heavily. Reddit ranks as the most-cited domain on Perplexity and second most-cited on SearchGPT. Wikipedia dominates ChatGPT citations at 167% frequency in some categories - cited more than once per prompt on average. These platforms systematically prioritize the types of sources that traditional commercial SEO has historically underweighted. A comprehensive study by OtterlyAI analyzing over 1 million citations across ChatGPT, Perplexity, and Google AI Overviews found that community platforms capture 52.5% of citations versus 47.5% for brand domains - and that 73% of brand sites have technical barriers blocking AI crawler access entirely.

Citation volatility compounds the challenge. Studies tracking the same queries over time found 40 to 60% monthly churn in which sources get cited. A page cited in January may not appear in February, replaced by different sources addressing the same topic. Google's organic rankings shift, but rarely at that rate. This volatility is why one-off snapshots of AI visibility are insufficient - and why continuous monitoring that tracks trends rather than single-point measurements is the only reliable approach.
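To make the churn figure concrete, here is a minimal sketch of one way to compute it from two monthly sets of cited URLs. The studies above don't spell out their exact definition, so the formula here (share of last month's cited sources that dropped out this month) is an assumption, and the URLs are placeholders.

```python
def citation_churn(previous: set[str], current: set[str]) -> float:
    """Share of the previous period's cited sources that are no longer cited.

    Assumed definition for illustration; other definitions (e.g. based on
    the union of both periods) would give different numbers.
    """
    if not previous:
        return 0.0
    dropped = previous - current
    return len(dropped) / len(previous)


# Placeholder data: sources cited for the same prompt set in two months.
january = {"a.example/x", "b.example/y", "c.example/z", "d.example/w", "e.example/v"}
february = {"a.example/x", "b.example/y", "f.example/u", "g.example/t", "h.example/s"}

churn = citation_churn(january, february)
print(f"Monthly churn: {churn:.0%}")
```

Run over the same prompt set every period, this gives a trend line rather than a single reading, which is the point of continuous tracking.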

Platform preferences also diverge from each other. Bing Copilot cites from its own indexed content and surfaces citation metrics directly in Bing Webmaster Tools' AI Performance report - the most transparent of all major platforms for publishers. Google AI Overviews show 76% overlap with top-10 ranking pages, which sounds like alignment until you examine the details: the top-10 pages an AI Overview cites are often not the pages that rank in the top 10 for the same organic query. According to Semrush's AI Overviews study across 10M+ keywords, AI Overviews now trigger for roughly 16% of all queries and are expanding fast into commercial and transactional search intent - well beyond the purely informational queries where they started.

Platform-by-platform citation mechanics

Understanding how each platform handles citations determines what you can measure natively versus what requires external monitoring.

ChatGPT (OpenAI)

ChatGPT Search shows web answers with a Sources UI, but link attribution varies by query type. There is no native publisher console. Monitoring relies on three approaches: synthetic query testing (sending structured prompts and recording whether your brand appears), referral log analysis in GA4 filtering for chatgpt.com as the referral source, and purpose-built monitoring platforms that automate this at scale. ChatGPT commands approximately 78.2% of AI search market share as of mid-2025, making it the highest-priority platform to monitor despite the measurement complexity. According to Digiday's analysis of Conductor data, 87.4% of all AI referral traffic across major industries comes from ChatGPT alone.

Perplexity

Perplexity always cites sources inline with numbered references, making citation rate relatively measurable compared to other platforms. There is no publisher console, so monitoring requires synthetic query tracking and referral log analysis. Perplexity generates 780 million monthly queries, growing 239% year-over-year, and typically sources heavily from Reddit, academic publications, and established media.

Claude (Anthropic)

Claude enables citations by default when its web search tool is active, including URL, title, and snippet fields - making its outputs particularly parseable for synthetic monitoring workflows. Claude mentions brands in 97.3% of responses, the highest rate among major models. Without web search, however, it includes no external links by default, meaning brand presence in those responses translates to awareness rather than direct traffic.

Google AI Overviews and AI Mode

Google AI Overviews appear in approximately 13.14% of US desktop searches and are live in 100+ countries. Google states AI features are included in Search Console reporting, but AI experiences change click behavior in ways that don't map cleanly to position data. A Pew Research Center study found that users who saw an AI summary clicked a traditional search result in only 8% of visits, compared to 15% when no AI summary was present. Rank tracking platforms that capture AI Overviews as a SERP feature - most added this capability in 2024 to 2025 - provide the most practical monitoring for this surface. For a deeper look at how to gain inclusion in these answers, see our guide to Google AI Overviews SEO.

Bing Copilot

Bing Webmaster Tools includes an "AI Performance" report (currently in public preview) with explicit citation-focused metrics: total citations, cited pages, and grounding queries. This is the first major search-console-equivalent built specifically for AI answers. Since Meta AI routes to Bing for live web information, your Bing citation footprint also affects Meta AI visibility - giving this report double value.

The core metrics that actually matter

Running an AI citation tracking program without a defined metric set is how teams generate activity without generating insight. These are the measurements worth building your reporting around.

AI share of voice (AI SOV): What percentage of relevant AI prompts mention or cite your brand, compared to competitors? This is the primary strategic metric - the equivalent of organic share of voice in traditional SEO. Calculate it by running a representative prompt library across target queries and recording which brands appear and how often. Dedicated AI search competitor tracking tools surface this comparison automatically across platforms.

Citation rate by query cluster: Not all queries are equally valuable. A citation rate on purchase-intent queries ("best [category] tool for enterprise") carries different commercial weight than informational queries. Segment your prompt library by intent and measure citation rate separately within each segment.

Brand mention rate vs. URL citation rate: As noted above, these are distinct signals. Claude may mention your brand without linking to you. Google AI Overviews may link your content without naming your brand explicitly. Track both metrics separately to understand where you have awareness coverage versus traffic-driving coverage.

Sentiment framing: Is your brand described favorably, neutrally, or unfavorably? And in what context - category leader, niche tool, legacy option? Sentiment and framing analysis requires either manual review of synthetic query outputs or a monitoring platform with built-in NLP scoring.

AI referral conversion rate: Segment your GA4 data to isolate sessions from AI referral sources. These visitors convert at 14 to 40% depending on platform and vertical - dramatically above organic averages. Tracking this metric separately makes the business case for citation investment concrete and finance-friendly.

Citation source stability: Which of your pages are being consistently cited versus cited sporadically? Pages with stable, recurring citation patterns represent your strongest content assets for AI visibility. Volatile citation patterns signal that the content isn't fully satisfying the query intent that's driving citations.
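As a rough illustration of how two of these metrics fit together, the sketch below computes brand mention rate and URL citation rate separately for each intent segment. The `PromptResult` record and its field names are hypothetical; they stand in for whatever your prompt-tracking export actually contains.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class PromptResult:
    """One recorded AI response (hypothetical schema for illustration)."""
    prompt: str
    intent: str            # e.g. "commercial" or "informational"
    brand_mentioned: bool  # brand name appeared in the answer text
    url_cited: bool        # one of your URLs appeared as a source


def rates_by_intent(results: list[PromptResult]) -> dict[str, dict[str, float]]:
    """Mention rate and URL citation rate, reported per intent segment."""
    totals = defaultdict(lambda: {"n": 0, "mentions": 0, "citations": 0})
    for r in results:
        t = totals[r.intent]
        t["n"] += 1
        t["mentions"] += int(r.brand_mentioned)
        t["citations"] += int(r.url_cited)
    return {
        intent: {
            "mention_rate": t["mentions"] / t["n"],
            "url_citation_rate": t["citations"] / t["n"],
        }
        for intent, t in totals.items()
    }


sample_results = [
    PromptResult("best tool for enterprise", "commercial", True, False),
    PromptResult("top tools compared", "commercial", False, False),
    PromptResult("what is project management", "informational", True, True),
    PromptResult("how to plan a sprint", "informational", True, False),
]
print(rates_by_intent(sample_results))
```

Keeping the two rates apart in the output is deliberate: a high mention rate with a low URL citation rate signals awareness coverage without traffic-driving coverage.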

The 30% conversion benchmark explained

One of the most-cited statistics in AI search economics is that LLM-referred traffic converts at 30 to 40% - far above what most channels produce. Understanding where this number comes from, and what it actually means, matters before you build business cases around it.

The 30 to 40% figure comes from practitioners tracking their own conversion data for traffic arriving from AI platform referrals. In a VentureBeat report from April 2026, Wyatt Mayham of Northwest AI Consulting shared that LLM-referred traffic to his firm converts at 30 to 40%, which he described as dramatically higher than SEO or paid social.

This aligns with related data points from other sources: Superprompt, tracking 12 million visits, measured ChatGPT-referred traffic converting at 14.2% versus Google's 2.8%. Thoughtmetric found AI-referred e-commerce traffic converting at 6.7% versus Google's 3.9%. Ahrefs reported 12.1% of AI-referred visitors became customers despite representing just 0.5% of total traffic.

A large-scale study by Microsoft Clarity, which analyzed over 1,200 publisher and news websites, found that LLM-referred visitors converted to sign-ups at 1.66% compared to just 0.15% from organic search - more than 11x the rate. Copilot-referred traffic showed the highest subscription conversion rate of any platform, at 17x the rate of direct traffic.

The reason for the conversion premium isn't mysterious. When an AI system recommends your brand by name in response to a direct question about your category, the user arriving at your site has already passed through a qualification layer - the AI's synthesis of their intent. They arrive informed, already having read a recommendation that included your brand. The intent signal is fundamentally different from someone clicking the third result in a list of blue links.

The practical implication for tracking: conversion rate from AI referrals should be a core KPI in your monitoring program, not an afterthought. A company receiving 1,000 monthly visitors from ChatGPT at 14.2% conversion generates 142 customers. Matching that output from Google requires roughly 5,070 visitors at 2.8% conversion. The channel economics are compelling enough that any CFO should understand the math.
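The arithmetic behind that comparison is easy to check. This short sketch uses the Superprompt conversion rates quoted above; the function names are illustrative, and the result for Google comes out slightly above 5,000 visitors.

```python
import math


def customers(visitors: int, conversion_rate: float) -> float:
    """Expected customers from a given visitor count and conversion rate."""
    return visitors * conversion_rate


def visitors_needed(target_customers: float, conversion_rate: float) -> int:
    """Visitors required to reach a customer target, rounded up."""
    return math.ceil(target_customers / conversion_rate)


chatgpt_customers = customers(1_000, 0.142)                  # ChatGPT at 14.2%
google_visitors = visitors_needed(chatgpt_customers, 0.028)  # Google at 2.8%

print(f"ChatGPT customers: {chatgpt_customers:.0f}")
print(f"Google visitors needed for the same output: {google_visitors:,}")
```

Swapping in your own GA4 conversion rates for each channel turns this into the business-case calculation for your site rather than a benchmark.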

What AI citation tracking enables is connecting the citation activity that produces those visitors to the actual revenue outcomes - completing the attribution loop that makes AI search investment defensible in budget conversations.

How to implement AI citation tracking

The implementation approach depends on your team's technical capacity and the scale of monitoring you need. Here's a practical framework, from foundational to advanced.

Layer 1: First-party data capture (start here)

GA4 AI referral segmentation: Filter sessions by referral source to isolate traffic from chatgpt.com, perplexity.ai, claude.ai, and other AI platforms. This captures only direct click-through traffic, missing the larger share of AI-influenced visits that arrive later through branded search. Still, it's the fastest way to establish a baseline and quantify the conversion premium on what you can already measure.
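If you export session referrers from your analytics tool, the segmentation described above can be sketched as a simple hostname lookup. This is not a GA4 API call; it assumes you already have referrer URLs as strings. The domain list covers only the three platforms named above, and any further domains you add are your own assumptions to verify.

```python
from typing import Optional
from urllib.parse import urlparse

# Referrer domains named in this guide; extend after verifying other platforms.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
}


def classify_referrer(referrer_url: str) -> Optional[str]:
    """Return the AI platform name for a referrer URL, or None if not AI."""
    host = (urlparse(referrer_url).hostname or "").lower()
    for domain, platform in AI_REFERRERS.items():
        # Match the bare domain and any subdomain such as www.
        if host == domain or host.endswith("." + domain):
            return platform
    return None


for ref in [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=best+crm",
    "https://www.google.com/",
]:
    print(ref, "->", classify_referrer(ref))
```

Matching on the parsed hostname rather than a substring avoids false positives from unrelated URLs that merely contain one of these domains in their path or query string.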

Bing Webmaster Tools AI Performance report: If you haven't set this up, do it immediately. The report surfaces total citations, average cited pages, and grounding queries with minimal configuration effort. Because Meta AI routes to Bing for live web data, this data covers more surface area than Bing's search share alone would suggest.

Google Search Console query cohort analysis: Pull impression and CTR data for your highest-value informational and commercial keywords. Look for keywords with declining CTR despite stable rankings - a pattern consistent with AI Overview suppression of clicks even when your page appears in the answer. This zero-click dynamic is growing: as our analysis of zero-click search trends shows, brands need citation share as a compensating visibility signal when click volume declines.
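The "declining CTR despite stable rankings" pattern can be flagged with a few lines once you have two periods of Search Console data exported. The record shape and both thresholds below are assumptions chosen for illustration, not values from any study; tune them to your own data.

```python
from dataclasses import dataclass


@dataclass
class QueryStats:
    """Two-period comparison for one query (hypothetical export shape)."""
    query: str
    position_before: float
    position_after: float
    ctr_before: float
    ctr_after: float


def flag_ctr_decline(
    rows: list[QueryStats],
    max_position_shift: float = 1.0,     # assumed: "stable" = within 1 position
    min_relative_ctr_drop: float = 0.2,  # assumed: flag drops of 20% or more
) -> list[str]:
    """Queries whose CTR fell noticeably while average position held steady."""
    flagged = []
    for r in rows:
        if r.ctr_before <= 0:
            continue  # no baseline to compare against
        stable = abs(r.position_after - r.position_before) <= max_position_shift
        drop = (r.ctr_before - r.ctr_after) / r.ctr_before
        if stable and drop >= min_relative_ctr_drop:
            flagged.append(r.query)
    return flagged


sample_rows = [
    QueryStats("best crm for startups", 3.1, 3.0, 0.08, 0.05),  # stable, CTR down
    QueryStats("crm pricing", 2.0, 6.5, 0.10, 0.04),            # ranking fell
    QueryStats("what is a crm", 4.0, 4.2, 0.05, 0.048),         # CTR roughly flat
]
print(flag_ctr_decline(sample_rows))
```

A flagged query is a candidate for AI Overview click suppression, not proof of it; check the live results page before drawing conclusions.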

Layer 2: Synthetic query monitoring

Build a prompt library covering your key product categories, use cases, and buyer questions. Structure prompts to mirror how real buyers phrase questions to AI systems: conversational, comparison-seeking, and recommendation-oriented.

Run these prompts weekly (or daily for high-priority competitive segments) across ChatGPT, Perplexity, and Claude. Record whether your brand appears, whether a URL is cited, how the brand is framed, and which competitors appear alongside or instead of you. The AI search prompt tracking use case within Atomic is designed specifically for this workflow, automating prompt execution and parsing at scale.

This is labor-intensive to do manually at scale. Most teams beyond the startup stage need tooling to automate prompt execution, response parsing, and trend reporting across a library of 50 to 200+ priority prompts.
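The response-parsing step can be sketched without committing to any platform's API. The function below assumes you have already captured the answer text and the list of cited URLs for a prompt by whatever means. The brand names and domain are placeholders, and framing or sentiment is left out because it needs manual review or a separate scoring step.

```python
import re
from urllib.parse import urlparse


def parse_response(
    answer_text: str,
    cited_urls: list[str],
    brand: str,
    brand_domain: str,
    competitors: list[str],
) -> dict:
    """Record brand mention, URL citation, and competitor mentions."""

    def mentioned(name: str) -> bool:
        # Whole-word, case-insensitive match on the brand name.
        pattern = rf"\b{re.escape(name)}\b"
        return re.search(pattern, answer_text, re.IGNORECASE) is not None

    def on_brand_domain(url: str) -> bool:
        host = (urlparse(url).hostname or "").lower()
        return host == brand_domain or host.endswith("." + brand_domain)

    return {
        "brand_mentioned": mentioned(brand),
        "url_cited": any(on_brand_domain(u) for u in cited_urls),
        "competitors_mentioned": [c for c in competitors if mentioned(c)],
    }


record = parse_response(
    answer_text="For remote teams, Acme and Globex are popular choices.",
    cited_urls=["https://www.acme.example/pricing", "https://reddit.com/r/pm"],
    brand="Acme",
    brand_domain="acme.example",
    competitors=["Globex", "Initech"],
)
print(record)
```

Storing one such record per prompt per run is what makes the weekly trend and share-of-voice reporting possible later.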

Layer 3: Competitive share-of-voice tracking

Basic mention tracking answers "are we visible?" Competitive SOV answers "are we gaining or losing ground?" Extend your synthetic query monitoring to record all brands mentioned in each response, not just your own. Calculate your share of total brand mentions across your prompt library. Track this weekly and segment by query intent - you may have strong citation presence in informational queries while being absent from the commercial queries that drive pipeline.
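A minimal sketch of the share-of-total-mentions calculation described above, assuming each response has already been reduced to the list of brands it names. The brand names are placeholders, and counting each brand at most once per response is a design choice, not a standard.

```python
from collections import Counter


def share_of_voice(responses: list[list[str]]) -> dict[str, float]:
    """Each brand's share of all brand mentions across a prompt library.

    `responses` holds, for each AI response, the brands mentioned in it.
    """
    counts: Counter = Counter()
    for brands in responses:
        counts.update(set(brands))  # count a brand once per response
    total = sum(counts.values())
    if total == 0:
        return {}
    return {brand: n / total for brand, n in counts.items()}


weekly_responses = [
    ["Acme", "Globex"],
    ["Globex"],
    ["Globex", "Initech"],
    [],  # a response that named no brands
]
sov = share_of_voice(weekly_responses)
for brand, share in sorted(sov.items()):
    print(f"{brand}: {share:.0%}")
```

Running the same function on commercial-intent and informational-intent prompts separately gives the segmented view that shows where you are gaining or losing ground.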


Layer 4: Content attribution and optimization feedback

The final layer closes the loop between citation visibility and content performance. Tag your top-cited pages by content type and topic. Map grounding queries from Bing AI Performance to your content roadmap. Identify where citation clusters concentrate - these are the content formats and topic areas your target AI platforms are retrieving from most frequently - and use that signal to inform what to create and update next.
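Finding where citations cluster can start as a simple tally. The sketch below assumes a log with one entry per observed citation and a hand-maintained mapping from URL to content type; both structures and all values are hypothetical.

```python
from collections import Counter


def citation_clusters(
    cited_urls: list[str], page_tags: dict[str, str]
) -> list[tuple[str, int]]:
    """Count observed citations per content type, most-cited first."""
    by_type: Counter = Counter()
    for url in cited_urls:
        by_type[page_tags.get(url, "untagged")] += 1
    return by_type.most_common()


# Hypothetical tagging of your own pages by content type.
page_tags = {
    "/compare/acme-vs-globex": "comparison",
    "/blog/what-is-crm": "explainer",
    "/pricing": "pricing",
}
# One entry per citation observed during monitoring.
citation_log = [
    "/compare/acme-vs-globex",
    "/compare/acme-vs-globex",
    "/blog/what-is-crm",
    "/compare/acme-vs-globex",
    "/changelog",
]
print(citation_clusters(citation_log, page_tags))
```

Anything that lands under "untagged" is a prompt to extend the tagging, since an untagged but frequently cited page is exactly the kind of signal this layer is meant to surface.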

Pages that answer questions directly, use structured comparison tables, include FAQ blocks, and present data clearly tend to out-cite pages optimized purely for keyword density. According to the OtterlyAI citation study, reference-grade content with proper schema markup and chunked structure receives 3 to 5x more citations than comparable pages without it. The content signals that make AI platforms cite you are measurable, which means you can optimize for them with the same rigor you apply to technical SEO.

Choosing the right tools

The market for AI citation tracking and monitoring has expanded rapidly. Here's how to evaluate your options based on where your team is.

What integrated SEO platforms offer

Platforms like Semrush and Ahrefs have added AI monitoring capabilities to their existing suites. Semrush's AI Overview tracking and Ahrefs' Brand Radar add-on let you track AI visibility in the same dashboard where you already manage keyword rankings. The advantage is minimal switching costs and integrated workflow. The limitation is depth: these additions are features within a platform designed around traditional SEO, not purpose-built AI citation systems.

Ahrefs Brand Radar draws from 260 million+ real monthly prompts, which is a meaningful data advantage for prompt coverage - but the AI citation features remain secondary to the core ranking and backlink product.

What purpose-built platforms offer

Platforms built specifically for AI search visibility typically offer deeper citation analytics, automated prompt tracking, content optimization recommendations, and competitive SOV reporting. Profound, which raised $96 million at a $1 billion valuation in February 2026, is the most-funded example. CitePulse, CiteMetrix, and similar tools occupy the same space with different pricing and platform coverage. For a detailed side-by-side comparison of the field, see our breakdown of the best AI search tracking tools in 2026, as well as the top GEO tools built specifically for generative engine optimization.

The tradeoff is a new tool, a new workflow, and another vendor relationship.

What Atomic offers

Atomic is built as an AI-native platform from the ground up - not a traditional SEO tool with AI features bolted on. It tracks brand mentions, citations, and sentiment across 10 AI platforms including ChatGPT, Gemini, Claude, Perplexity, and DeepSeek. Google Search Console, GA4, AI Overviews, and AI Mode data all live in a single dashboard, which matters because connecting citation visibility to traffic and conversion outcomes requires that integration.

Atomic also includes a dedicated LLM technical audit that checks whether AI crawlers can actually access and parse your site - the eligibility layer that many teams skip but that determines whether sophisticated citation optimization efforts can have any effect. An AI platform can't cite content it can't access. As the OtterlyAI study found, 73% of brand sites have technical barriers blocking AI crawler access - robots.txt configurations, CDN rules, or JavaScript rendering issues - making this audit a critical first step before any content optimization.
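For the robots.txt part of that eligibility check, Python's standard library is enough for a first pass. This is a sketch, not how Atomic's audit works. The crawler user-agent tokens listed are assumptions to confirm against each vendor's current documentation, and the check says nothing about CDN rules or JavaScript rendering.

```python
from urllib.robotparser import RobotFileParser

# Assumed user-agent tokens; verify against each vendor's published docs.
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot"]


def ai_crawler_access(robots_txt: str, url: str) -> dict[str, bool]:
    """Whether each AI crawler may fetch `url` under the given robots.txt."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in AI_CRAWLERS}


# Example robots.txt that blocks one AI crawler and allows everyone else.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

access = ai_crawler_access(sample_robots, "https://example.com/pricing")
for agent, allowed in access.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

Parsing the file contents directly keeps the example offline; in practice you would fetch your live robots.txt and run the same check against your highest-value URLs.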

The AI Agents in Atomic automate monitoring tasks like keyword decay alerts, content refresh triggers, and search anomaly detection on a defined schedule - reducing the manual overhead that makes citation tracking prohibitive for lean teams.

Pricing starts at a free tier, with paid tiers from $20 per month, which makes establishing a monitoring baseline accessible before committing to a full program.

The decision framework

Choose an integrated add-on if AI citation monitoring extends your existing SEO program and you want to minimize tool sprawl. Choose a purpose-built platform if you're treating AI visibility as a distinct strategic initiative with dedicated budget, reporting, and optimization workflows. Either way, do not rely on GA4 and Search Console alone - they structurally cannot show you what's happening in AI responses, only the downstream traffic effects of what happened. For a broader view of how these monitoring tools fit into a full GEO analytics stack, see our guide to the best GEO analytics tools in 2026.

FAQ

Can I use AI to find citations?

Yes - with important nuance about what "using AI" actually means in this context.

There are two distinct use cases: using AI tools to find where your brand is being cited in AI search responses, and using AI to discover academic or web citations of your own content.

For tracking AI search citations, the most practical approach is using AI monitoring platforms - either purpose-built tools like Atomic, Profound, or CitePulse, or AI-monitoring add-ons within existing SEO platforms. These tools run structured prompts across AI platforms, parse responses for brand mentions and URLs, and surface citation patterns at scale.

You can also build lightweight manual workflows: run conversational queries related to your product category through ChatGPT, Perplexity, and Claude, then record whether your brand appears and how it's described. This works for initial audits but doesn't scale beyond a small prompt set without automation.

For academic or web citation tracking - discovering who has linked to or referenced your content across the web - tools like Ahrefs, Majestic, and Google Scholar remain the standard. These are distinct from AI citation tracking, even though the term "citation" appears in both contexts.

The short version: yes, AI-powered tools are the most practical way to track your AI citations. No manual approach is sufficient at any meaningful scale.

What is the 30% rule in AI?

The "30% rule" in AI search is a reference to the conversion rate benchmark observed for LLM-referred traffic. Practitioners have reported LLM-referred traffic converting at 30 to 40% - far above the 2 to 3% typical of traditional organic search and the 3 to 6% typical of paid search.

The clearest articulation comes from Wyatt Mayham of Northwest AI Consulting, reported in VentureBeat in April 2026: for his company, LLM-referred traffic is converting at 30 to 40%, which he described as dramatically higher than SEO or paid social. Related research from Superprompt (tracking 12 million visits) found ChatGPT-referred traffic converting at 14.2% versus Google's 2.8%, and Ahrefs reported 12.1% of AI-referred visitors becoming customers. The Microsoft Clarity study corroborates this directionally, finding that LLM-referred visitors converted to sign-ups at more than 11x the rate of organic search across 1,200+ publisher sites.

The underlying reason is buyer intent quality. When a user has a conversation with an AI system and receives a specific brand recommendation, they arrive at that brand's site pre-qualified and pre-informed. The AI has already synthesized their need, assessed options, and named a recommendation. The user is not browsing - they're verifying. That intent structure produces fundamentally higher conversion rates than keyword-driven traffic.

The practical implication: AI citation tracking programs should report conversion rate from AI referrals as a primary business metric, not just citation frequency. The economics are what justify the investment to leadership.

Can ChatGPT find citations?

ChatGPT can surface citations in two distinct ways, and the distinction matters for how you approach both tracking and optimization.

First, when ChatGPT Search is active, ChatGPT retrieves live web content and displays a Sources panel with links to the pages it drew from in generating its answer. These are functional citations that drive direct referral traffic to cited pages. ChatGPT commands roughly 78.2% of AI search market share, making this citation surface highly valuable - but there is no native publisher console for tracking whether your URLs appear. Monitoring requires synthetic query testing combined with GA4 referral source filtering for chatgpt.com. For teams serious about this surface, the best ChatGPT tracking tools automate this process at scale.

Second, within its base knowledge (without web search), ChatGPT may reference your brand from training data without linking to any source. This is not a trackable citation in the traffic sense, but it does reflect how the model has learned to categorize your brand - relevant for brand narrative and entity recognition, not traffic.

For SEO and marketing teams, the priority is the ChatGPT Search citation surface. Testing representative purchase-intent queries through ChatGPT with web search enabled is the standard method for auditing your citation presence. Dedicated monitoring tools automate this across a larger prompt set and track citation patterns over time.

ChatGPT cannot replace a dedicated AI citation tracking system - it has no analytics interface for publishers, and its citation behavior varies with each query. But testing your own citation presence through ChatGPT is a useful starting point before implementing a more systematic monitoring program.

Is there an AI for citations?

Yes - an entire category of tools has emerged specifically for AI citation tracking, and the market is growing rapidly. By mid-2025, researchers identified more than 35 tools focused on AI search visibility, spanning categories called Generative Engine Optimization (GEO) and Answer Engine Optimization (AEO).

Atomic is one such platform, built to track brand mentions, citations, and sentiment across 10 AI platforms in a unified dashboard alongside traditional SEO analytics. Other dedicated tools include Profound (purpose-built enterprise AI monitoring), CitePulse, CiteMetrix, and CiteReach.

Major SEO platforms have also added AI citation capabilities. Semrush includes AI Overview tracking. Ahrefs launched Brand Radar, which draws from 260 million real monthly prompts and covers YouTube, TikTok, and Reddit alongside AI platforms. Writesonic's GEO suite covers the full loop from finding citation gaps to creating content to tracking whether it worked.

The category is early, which means tool capabilities and pricing are still evolving. The platforms that distinguish themselves are those connecting citation data to revenue outcomes - not just reporting that you were mentioned, but attributing those mentions to traffic, conversions, and pipeline.

For teams starting from zero, a practical first step is Bing Webmaster Tools' AI Performance report (free, first-party, and immediate to set up) combined with GA4 AI referral segmentation and a basic synthetic query audit through a dedicated AI monitoring platform. That combination surfaces enough data to make the business case for a more systematic program. The AI search visibility tracking software page details exactly how this data flows in a unified monitoring environment.

Why most citation tracking programs fail

Most teams that start citation tracking either run one audit and move on, or they start tracking the wrong metrics and can't connect the data to decisions.

The audit-and-forget failure mode happens because AI citation patterns are volatile. A page cited strongly one month may lose that position the next as AI platforms update their retrieval behavior or new content enters the consideration set. A single snapshot tells you where you were, not where you're heading.

The wrong-metrics failure mode typically manifests as tracking only brand mention frequency without context. A high citation count in informational queries that don't produce qualified traffic is not the same as strong citation coverage on purchase-intent queries that drive pipeline. The two need to be measured and reported separately, and the latter needs to be connected to conversion data.

The teams that extract genuine value from AI citation tracking treat it as a continuous operational function - weekly synthetic query testing, monthly SOV reporting against competitors, quarterly content audits aligned to citation gaps, and ongoing attribution tracking in GA4. This is the same rigor that successful organic SEO programs apply to rank tracking and content performance, applied to a newer and currently undermonitored surface. A structured approach to why AI search monitoring tools matter and how to operationalize them is a useful companion resource for teams building this function from scratch.

The window to establish citation presence while patterns are still fluid is approximately 12 to 18 months. As AI search grows from its current 8.2% traffic share toward a projected 25% by late 2027, the brands appearing in 40% of relevant AI responses will have built a compounding advantage that becomes significantly harder to dislodge.

Conclusion

AI citation tracking is not a future-state consideration. It's an operational requirement for any marketing or SEO team managing a website with meaningful organic traffic in 2026.

The economics are clear: AI-referred traffic converts at rates that can reach 30 to 40%, far above what any other channel reliably produces. The measurement gap is equally clear: your current analytics stack cannot show you your citation presence, competitive share of voice, or which content is driving AI visibility across the platforms that matter.

Building a citation tracking program requires layering first-party data capture from GA4 and Bing Webmaster Tools with synthetic query monitoring and competitive SOV measurement. The complexity scales with the size of your prompt library and the number of platforms you need to monitor. For teams beyond the initial audit stage, purpose-built monitoring infrastructure - either integrated within an existing SEO platform or through a dedicated tool like Atomic - becomes the practical path to maintaining continuous visibility.

The companies that win in AI search aren't the ones that wait to see how the channel matures. They're the ones already tracking which prompts drive qualified traffic, which competitors are capturing the citations they're missing, and which content updates are moving their citation share in the right direction.

Start with the free-to-access data sources. Build your synthetic query library. Connect citation visibility to conversion outcomes. Then scale the program as the data shows you where the opportunity is concentrated.