By mid-2025, AI search platforms (ChatGPT, Perplexity, Google AI Overviews) commanded 8.2% of total search traffic and were growing 150% year-over-year. More importantly, they converted at 4–5× the rate of traditional Google search. When ChatGPT visitors became customers 14.2% of the time versus Google's 2.8%, the channel economics flipped. Less traffic, better customers, higher revenue.

But companies had no way to measure it. Google Analytics couldn't distinguish AI traffic from organic. Search Console didn't track citations. 80% of AI citations came from sources that never appeared in Google's top 10. Traditional SEO tools measured rankings and traffic, neither of which predicted whether ChatGPT would recommend your product when someone asked.

In February 2026, Profound secured a $96 million Series C at a $1 billion valuation, led by Lightspeed Venture Partners and backed by Sequoia Capital and Kleiner Perkins. The message was clear: when AI search begins driving qualified traffic at scale, and legacy analytics can’t measure it, monitoring shifts from optional tooling to core marketing infrastructure.

In this article, we explain why you should use AI search monitoring tools in 2026 and how they can help you grow your business.

Why Use AI Search Monitoring Tools

The short answer: because you are almost certainly losing high-intent customers to competitors right now and have no way to know it.

The longer answer has five parts.

1. Your existing tools are blind to where discovery is actually happening.

Google Analytics, Search Console, and every traditional SEO platform were built for a world where discovery meant a user typing a query and clicking a blue link. That world still exists, but it's no longer the whole picture. When someone asks ChatGPT, "What's the best project management tool for a remote team?" and it recommends your competitor, that moment of influence is completely invisible in your current stack. No impression, no click, no session, nothing. You only see the downstream effect weeks later when branded search ticks up, and you attribute it to a campaign.

2. AI search traffic is your highest-converting channel, and you're not managing it.

The data is unambiguous on this. AI-referred visitors convert at 4–5× the rate of standard Google traffic. They spend more time on site, view more pages, and arrive already informed. If a channel were converting at that rate in paid search, you'd have a dedicated budget, a dedicated team, and weekly reporting. Right now, most companies have none of those things for AI search because they can't see it well enough to manage it.

3. Citation slots are finite and filling up fast.

When ChatGPT answers a question about your product category, it typically cites 5–7 sources. Those slots are not distributed randomly; they go to the brands that have established credibility through the signals AI platforms recognize: authoritative content, frequent citation by trusted third-party sources, and presence on platforms like Reddit and Wikipedia that AI models weight heavily.

Once your competitor occupies a citation slot for a high-value query, displacing them requires dramatically superior content, not just marginally better content. The window to claim these positions while they're still contested is closing.

4. You can't optimize what you can't measure.

Right now, most companies make content decisions based on keyword rankings and organic traffic, neither of which has meaningful correlation to AI citation. You could be publishing exactly the right content and ranking #1 on Google while being completely absent from AI responses for the same queries. Or you could be ranking #8 but getting cited in every relevant ChatGPT answer.

Without monitoring, you have no feedback loop. You're flying blind on a channel that is growing 150% year-over-year.

5. The cost of waiting compounds.

Every month you're not monitoring is a month competitors are building citation presence, accumulating brand mentions in AI training data, and establishing the credibility signals that make them the default recommendation.

The rest of this guide covers what these tools actually track, which ones are worth using, and how to implement monitoring in a way that connects to revenue rather than vanity metrics.

The Conversion Quality Paradox

AI search traffic doesn't convert marginally better than Google. It converts dramatically better, and the engagement patterns explain why.

ThoughtMetric analyzed 100 e-commerce stores and found ChatGPT traffic converted at 6.7% compared to Google's 3.9%. Superprompt, tracking 12 million visits, measured AI search converting at 14.2% versus Google's 2.8%, a fivefold advantage. Ahrefs reported the most extreme differential: AI-referred visitors accounted for 12.1% of signups despite representing just 0.5% of total traffic, a roughly 23× efficiency gain.

The engagement patterns tell the story. ChatGPT visitors average 2.3 pages per session versus Google's 1.2. Session duration runs 5:18 for ChatGPT compared to 1:24 for traditional search. Claude users stay 6:42. These aren't people clicking the first result and bouncing; they're arriving with intent, already educated by the AI conversation that led them there.

The economic implications reshape channel strategy. At the current scale, AI search represents less than 1% of total web traffic. But when that fractional traffic converts at 5× the rate, its revenue contribution matches that of a channel with 5× the volume. A company receiving 1,000 monthly visitors from ChatGPT at 14.2% conversion generates 142 customers. The same company needs roughly 5,070 Google visitors at 2.8% conversion to match that output.
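The break-even arithmetic in this paragraph can be checked with a short sketch. The two conversion rates are the Superprompt figures quoted above; the function name is illustrative, and the exact break-even lands slightly above 5,000 visitors.

```python
import math

def visitors_needed(target_customers: float, conversion_rate: float) -> int:
    """Smallest whole number of visitors that yields at least target_customers."""
    return math.ceil(target_customers / conversion_rate)

AI_RATE = 0.142      # ChatGPT conversion rate quoted above
GOOGLE_RATE = 0.028  # Google conversion rate quoted above

ai_customers = 1_000 * AI_RATE  # customers from 1,000 AI-referred visitors
google_visitors = visitors_needed(ai_customers, GOOGLE_RATE)

print(round(ai_customers), google_visitors)  # 142 5072
```

Swapping in your own conversion rates shows how much traditional traffic each AI-referred visitor is worth in your funnel.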

Multiple analysts project the crossover point, where AI search drives equal revenue despite lower traffic, happening between late 2027 and early 2028, when AI search reaches approximately 25% market share. The channel that seems small today becomes strategically essential tomorrow.

The Dark Funnel Problem

There's a compounding issue that makes AI search even harder to measure than it already appears. Someone asks Perplexity for a product recommendation. It cites your brand. The person doesn't click through immediately. Instead, they search your company name on Google three days later and convert. Your analytics show a branded search conversion. The Perplexity citation that generated awareness never appears in your data.

This dark funnel effect means the impact of AI search is consistently understated in traditional measurement. Companies see branded search increasing without understanding the source. They might attribute it to word-of-mouth or recent campaigns. Meanwhile, AI platforms are systematically introducing prospects to their brand, and those citations accumulate as untracked brand-building.

Why Traditional Metrics Fail

AI search platforms use fundamentally different sources than Google, making traditional search rankings irrelevant for predicting AI visibility.

Research analyzing ChatGPT's citation patterns found only 12% overlap with URLs appearing on Google's first page. The other 88% came from sources ranking outside the top 10 or not ranking at all. This isn't noise; it's systematic divergence. When someone asks ChatGPT for project management software recommendations, the sources it cites rarely match what ranks first for "project management software" in Google.

The citation volatility compounds the challenge. Studies tracking the same queries over time documented 40–60% monthly churn in which sources get cited. A page cited in January might not appear in February, replaced by different sources answering the same question. Google's rankings shift, but not at that rate.
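One simple way to quantify that churn for your own tracked queries is to compare the set of sources cited in one period with the set cited in the next. The sketch below is one possible definition of churn (share of previously cited sources that dropped out), not the methodology of the studies mentioned above, and the URLs are made-up placeholders.

```python
def citation_churn(previous: set[str], current: set[str]) -> float:
    """Share of previously cited sources that are no longer cited."""
    if not previous:
        return 0.0
    dropped = previous - current
    return len(dropped) / len(previous)

# Hypothetical cited sources for the same prompt in two consecutive months.
january = {"example.com/guide", "reddit.com/r/pm", "wikipedia.org/wiki/PM",
           "vendor-a.com", "vendor-b.com"}
february = {"example.com/guide", "wikipedia.org/wiki/PM",
            "vendor-c.com", "vendor-d.com", "vendor-e.com"}

print(f"{citation_churn(january, february):.0%}")  # 60%
```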

Platform preferences also differ dramatically. Reddit ranks #1 on Perplexity for citation frequency, #2 on SearchGPT, and #3 on Google AI Mode. Wikipedia dominates ChatGPT citations at 167% frequency in some categories, cited more than once per prompt on average.

These platforms prioritize community-edited content and authoritative references over commercial websites, even when those commercial sites rank first in traditional search.

Ahrefs' research on AI Overview citations found 76% came from top-10 ranking pages, which sounds like alignment until you examine the details. The top 10 that AI Overviews cite often differ from the top 10 in organic results for the same query. You might rank #1 for a keyword but not appear in the AI Overview, or rank #8 yet get cited as the primary source.

Researchers describe the correlation as "a coin flip at best" for predicting citations from ranking position.

What Google Analytics and Search Console Can't Tell You

Google Search Console doesn't reveal which keywords trigger AI Overviews. The data simply doesn't exist in the interface. Google Analytics 4 blends AI Overview traffic into standard organic search with no way to segment it. When researchers tried to estimate AI Overview's impact by filtering for keywords showing click-through rate drops without ranking changes, they found a 34.5% decrease in clicks, but this methodology requires guesswork rather than direct data.

A Pew Research Center analysis of March 2025 browsing data found that when an AI summary appeared in Google results, users clicked a source link only about 1% of the time, and sessions ended more often when an AI summary was present (26% vs 16%). Similarweb reports the median zero-click rate rises to ~80% when AI Overviews are present, versus ~60% without them.

This is the measurement gap the market responded to.

The Tool Category That Emerged

By August 2025, researchers had identified over 35 tools focused on AI search visibility, spanning categories called Generative Engine Optimization (GEO) and Answer Engine Optimization (AEO). The naming fragmentation signals early-stage market formation; there is no consensus yet on what to call this category.

Every major SEO platform also launched AI monitoring capabilities between 2024 and 2025, validating the category from the opposite direction. When incumbents with established revenue streams invest in new infrastructure, it signals a market shift rather than a speculative opportunity.

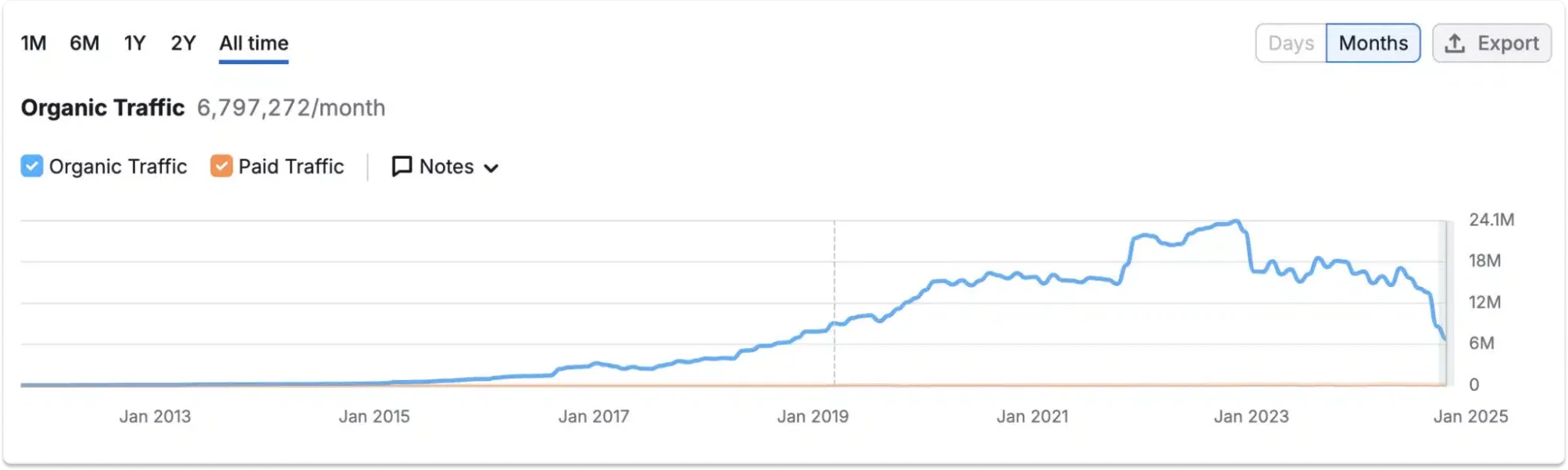

The HubSpot Case Study

HubSpot's traffic collapse became the clearest illustration of why AI monitoring matters. Organic visits dropped from a March 2023 peak of 24.4 million monthly visits to 6.1 million by early 2025, depending on measurement methodology. SEMrush and Ahrefs both showed year-over-year declines of approximately 81%.

But revenue didn't follow traffic down. The company's stock has delivered 2,200% returns since its IPO. HubSpot now reports just 10% of leads originate from blog traffic, down from what was once the majority of their pipeline.

Their response was telling: they pivoted toward AI citations, claiming to be "cited in LLMs more than any other CRM." Internal data showed traffic from large language models increasing while traditional search traffic declined. Without AI monitoring, you'd look at collapsing traffic numbers and conclude strategic failure. With monitoring, you might recognize successful adaptation to a distribution shift.

The lesson extends to any company in this position. When discovery migrates from blue links to conversational AI, measurement must migrate too.

Market Examples: 3 Tools That Can Help You Win in AI Search

The market in 2026 splits into three overlapping layers: all-in-one SEO suites that added AI visibility features, purpose-built AI monitoring platforms, and high-frequency SERP data providers. Here are some examples:

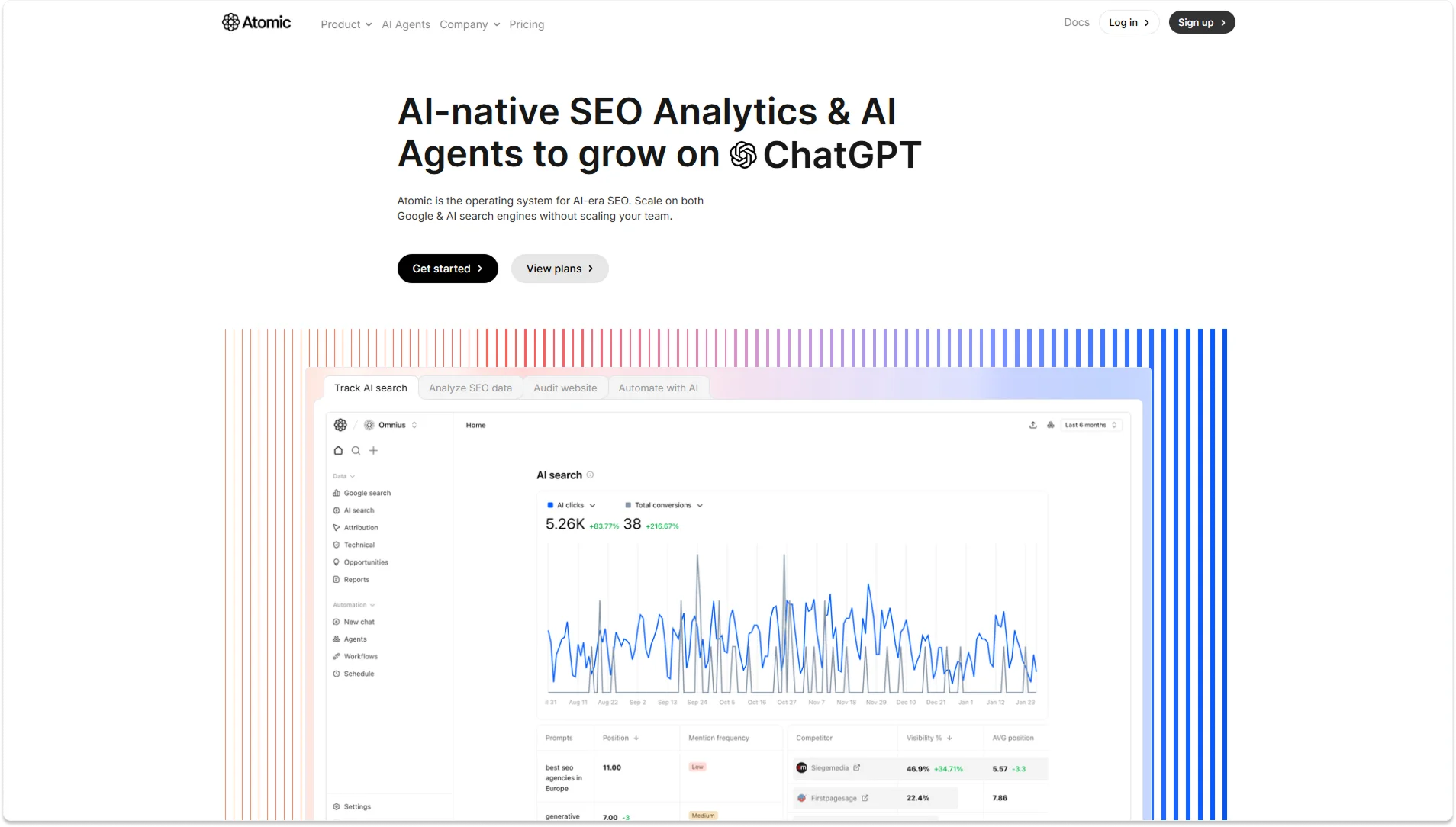

1. Atomic

An AI-native platform that combines Google SEO analytics, AI search tracking, technical auditing, and workflow automation in one place. It was built for AI search from the ground up rather than adapted from a traditional SEO tool.

- Tracks brand mentions, citations, and sentiment across 10 AI platforms, including ChatGPT, Gemini, Claude, Perplexity, and DeepSeek

- Google Search Console, GA4, AI Overviews, and AI Mode data all in one dashboard

- Dedicated LLM technical audit checking whether AI crawlers can access and parse your site

- AI Agents that automate tasks like content refreshment, keyword decay monitoring, and search alerts on a schedule

Pricing: Free tier available. Starter $20/month, Agentic (includes automation) $40/month, Team $100/month.

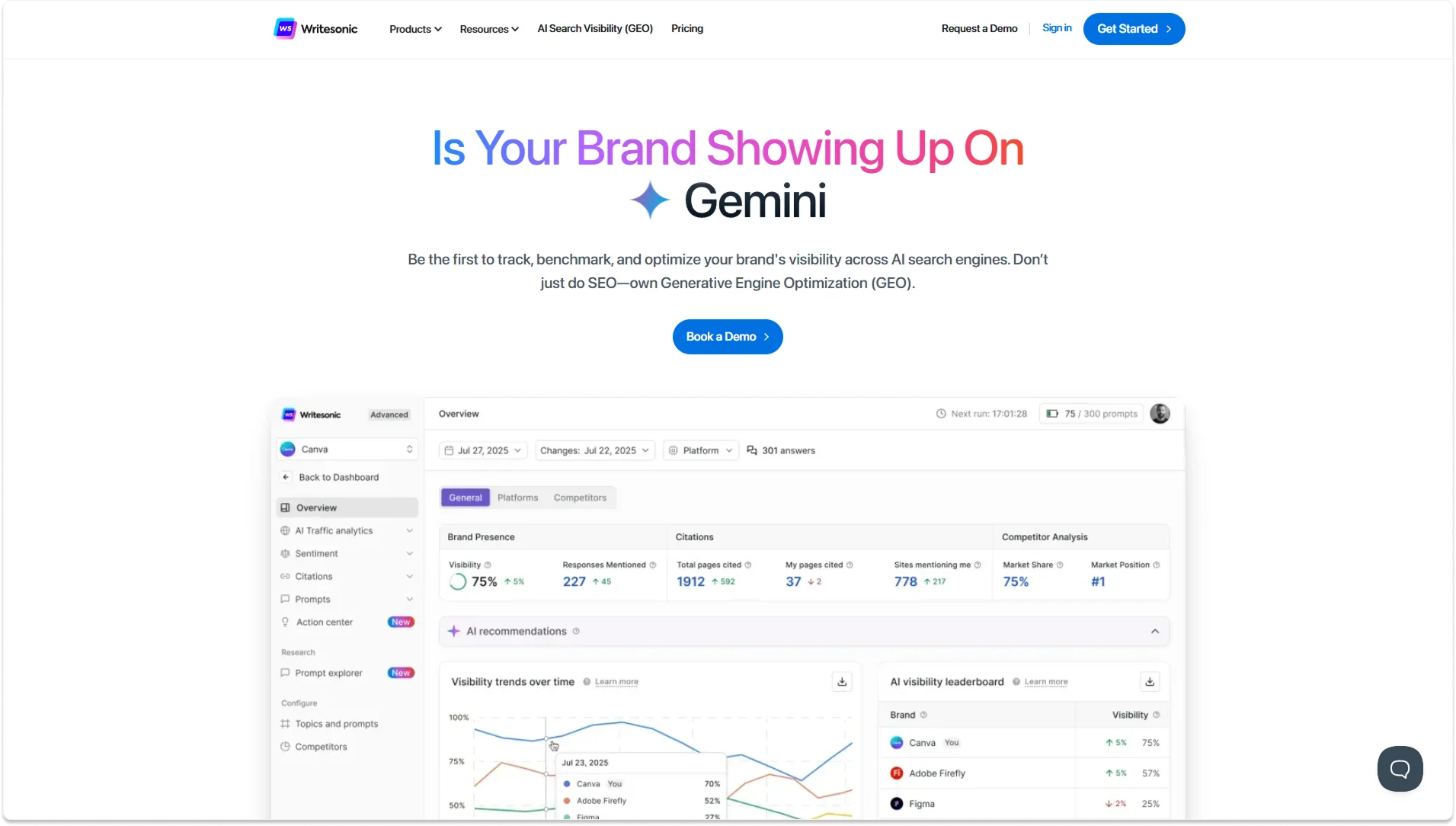

2. Writesonic GEO

A GEO (Generative Engine Optimization) suite built on top of an existing AI content platform, covering the full loop from finding where your brand is absent in AI answers to creating content to fix it and tracking whether it worked.

- Brand Presence Explorer tracks mention rate, share of voice, and sentiment across major AI platforms vs. competitors

- AI Traffic Analytics shows which pages AI crawlers are actively visiting — a direct signal of what's being considered for citation

- Citation tracking maps which third-party sources AI platforms reference when mentioning your brand

- Built-in content writing and SEO optimization tools so you can act on findings without switching platforms

Pricing: GEO features from $249/month

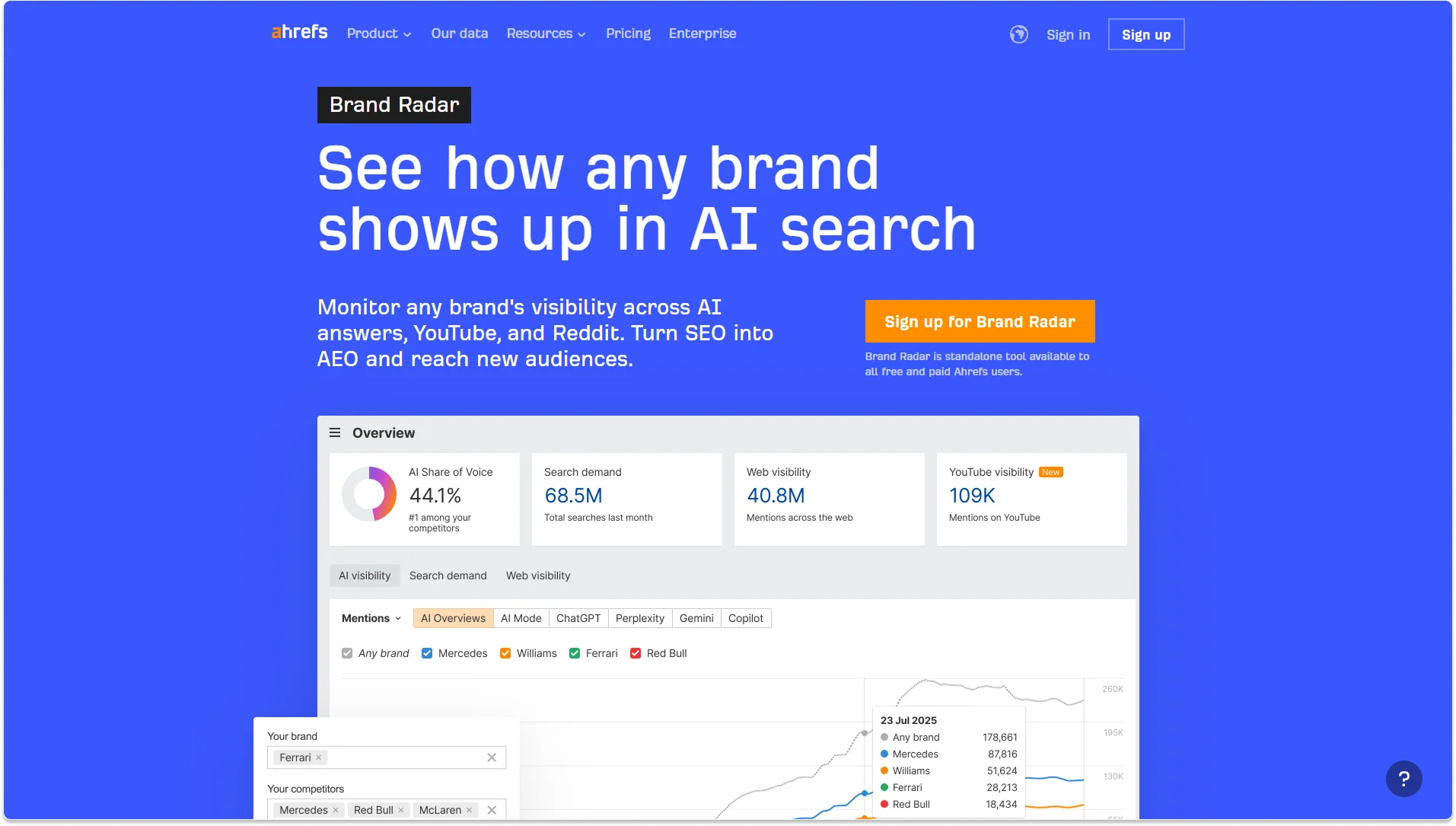

3. Ahrefs Brand Radar

Ahrefs' AI visibility add-on, distinguished by its data scale: 260 million+ real monthly prompts from actual search behavior rather than synthetic queries. Goes beyond AI platforms to also track brand mentions on YouTube, TikTok, and Reddit.

- Monitors visibility across ChatGPT, Gemini, Perplexity, Copilot, and Google's AI surfaces

- Custom prompt tracking for specific bottom-of-funnel queries you define

- Cross-platform brand mention tracking across YouTube, TikTok, and Reddit

- Integrates directly with Ahrefs' existing SEO dataset in the same interface

Pricing: Add-on to a base Ahrefs plan ($129/month+). Individual AI indexes at $199/month per platform, or $699/month for all 6 bundled.

Key Capabilities That Matter in 2026

An AI search monitoring tool in 2026 is a system that continuously collects visibility signals and uses AI/ML to interpret them, detect meaningful change, and trigger actions across multiple discovery surfaces. "Search" now encompasses four distinct layers: classic SEO performance telemetry (impressions, clicks, CTR from Search Console), SERP layout and feature tracking (AI Overviews, featured snippets, video packs), AI visibility or GEO/AEO (whether AI answers mention your brand and how stable that is), and operational monitoring (always-on technical detection with real-time alerting).

The capabilities that actually matter, and what to use as success metrics:

- Query intent detection classifies each query as informational, navigational, commercial, or transactional, and sets realistic CTR expectations based on the SERP layout that will appear. A page ranking #1 for an informational query dominated by AI Overviews will have a fundamentally different CTR ceiling than a commercial query with traditional results. Success metric: CTR lift versus expected CTR for the intent bucket, not raw rank.

- SERP feature tracking detects which modules appear (AI Overviews, featured snippets, local packs), whether you "own" them, and ideally your position within those features. Success metric: share of feature ownership on high-intent query sets.

- Anomaly detection flags statistically unusual changes: sudden drops in impressions, spikes in AI Overview appearance, and indexing or canonical anomalies. The value is catching things fast. Success metrics: time-to-detect and false-positive rate.

- Competitor monitoring benchmarks your visibility versus competitors and identifies fast-climbing new entrants in your citation space. Success metric: percentage of priority topics where you maintain a top-3 share of voice.

- Content gap analysis finds topics competitors are cited for where you're absent, extending to AI content gaps and prompt clusters, not just keyword gaps. Success metric: percentage of shipped pages hitting target impressions within a defined window.

- Historical trending tracks citation patterns over time. Given that AI platforms churn 40–60% of cited sources monthly, trends are significantly more valuable than snapshots. A tool that only shows current-state monitoring is essentially blind to whether you're improving or declining.

- Real-time alerting routes role-based notifications into email, Slack, or ticketing systems for high-severity events. Without this, you find out about problems when someone notices the traffic drop in a monthly report. Success metric: mean-time-to-fix and prevented revenue loss.
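To make the anomaly detection capability concrete, here is a minimal sketch that flags a daily value when it sits far from a recent baseline. It uses a plain z-score, which is a deliberately simple stand-in and not the method of any tool named in this article; the threshold and the impression numbers are assumptions for illustration.

```python
from statistics import mean, stdev

def is_anomaly(history: list[float], latest: float, z_threshold: float = 3.0) -> bool:
    """Flag `latest` if it is more than z_threshold standard deviations from the baseline mean."""
    if len(history) < 2:
        return False  # not enough data to form a baseline
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        return latest != baseline
    return abs(latest - baseline) / spread > z_threshold

# Hypothetical daily impressions for the previous week.
daily_impressions = [10_200, 9_800, 10_050, 10_400, 9_950, 10_100, 9_900]

print(is_anomaly(daily_impressions, 6_500))   # True: a sudden drop
print(is_anomaly(daily_impressions, 10_300))  # False: within normal variation
```

Raising `z_threshold` trades slower detection for fewer false positives, which is the same trade-off behind the time-to-detect and false-positive-rate metrics above.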

How to Evaluate and Choose the Right Tool

Start with platform coverage

The three core AI search systems driving the majority of current traffic are ChatGPT (78.2% of AI search market share as of August 2025, 800 million weekly users), Google AI Overviews (appearing in 13.14% of US desktop searches, rolled out to 100+ countries with 1B+ monthly users), and Perplexity (780 million monthly queries, up 239% year-over-year). Monitoring these three captures the majority of current AI search activity.

Extended coverage adds Gemini, Microsoft Copilot, Claude, and Google AI Mode, which have smaller but growing user bases and often surface different sources. Comprehensive enterprise monitoring covers 10+ platforms. The practical approach: start with the three core platforms and expand as budget allows.

Integration versus standalone

SEMrush and Ahrefs offer AI monitoring as add-ons to existing SEO platforms. This minimizes switching costs for current customers, and you track AI citations in the same dashboard where you already work. Training time is minimal, and vendor relationships don't multiply.

Standalone tools like Profound build purpose-fit products optimized specifically for AI visibility. This typically delivers deeper functionality (automated content optimization, custom prompt tracking, and more sophisticated competitor analysis) but requires adopting a new tool, learning different workflows, and managing another vendor relationship.

The decision rule: choose integrated add-ons when AI monitoring extends existing SEO efforts. Choose standalone when treating AI visibility as a distinct strategic initiative deserving dedicated infrastructure.

Data depth separates basic from strategic

Entry-level tools track mentions and citations: whether your brand appears in AI responses and whether your URLs get linked. This answers "are we visible?" but not "compared to whom?" or "why?"

Intermediate capabilities add sentiment analysis, share of voice, and competitor benchmarking. Advanced features add content recommendations, automated optimization suggestions, and attribution tracking. The critical capability often overlooked: historical data. Citation patterns shift month-to-month, making trends far more valuable than snapshots.

Metrics to Track

Once monitoring is running, the following metrics form the core reporting layer:

- Query cluster visibility: weighted share of voice across priority keyword clusters; tracks competitive position beyond single keywords and aligns with topic ownership rather than individual rankings.

- AI Overview presence rate: percentage of tracked queries where AI Overviews appear; directly correlates with higher zero-click behavior and should inform how aggressively you pursue click-generating versus citation-generating content.

- Click opportunity index: impressions multiplied by expected CTR adjusted for SERP layout; prevents over-investing in queries where AI features have structurally reduced the click opportunity regardless of rank.

- AI share of voice: percentage of AI prompts or answers that mention or cite your brand; the leading indicator for AI discovery surfaces, and a complement to classic SEO share of voice.

- Conversion rate from AI referrals: tracked separately in GA4; keeps the entire program tied to business outcomes rather than visibility theater.

- Time-to-detect and time-to-fix: median time from issue start to alert, and from alert to resolution; the operational KPI that determines whether monitoring actually prevents revenue loss or just documents it.
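Two of these metrics reduce to simple formulas, sketched below. The `layout_factor` multiplier and every number in the example are hypothetical; the article does not define how the SERP-layout adjustment is calculated, so treat this as one possible reading rather than a standard definition.

```python
from dataclasses import dataclass

@dataclass
class QueryStats:
    query: str
    impressions: int
    expected_ctr: float   # baseline CTR for the ranking position (assumed input)
    layout_factor: float  # hypothetical multiplier, e.g. 0.5 when an AI Overview is present

def click_opportunity(q: QueryStats) -> float:
    """Impressions multiplied by expected CTR, adjusted for SERP layout."""
    return q.impressions * q.expected_ctr * q.layout_factor

def ai_share_of_voice(answers: list[str], brand: str) -> float:
    """Share of collected AI answers that mention the brand (case-insensitive)."""
    if not answers:
        return 0.0
    mentions = sum(brand.lower() in answer.lower() for answer in answers)
    return mentions / len(answers)

stats = QueryStats("best crm for startups", 10_000, 0.25, 0.5)
answers = [
    "Popular options include Acme CRM and two others.",
    "Many teams choose a lightweight tool.",
    "Consider pricing before committing.",
    "Reviews favor simple onboarding.",
]

print(click_opportunity(stats))             # 1250.0
print(ai_share_of_voice(answers, "acme"))   # 0.25
```

A substring match is a crude mention detector; real tools would also need to handle brand aliases and distinguish mentions from linked citations.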

Risks and Limitations to Understand

Measurement ambiguity persists. Google states AI features are included in Search Console reporting, but AI experiences change click behavior in ways that don't map cleanly to position data. Teams can misattribute performance shifts, blaming content quality when the real cause is a change in SERP layout.

AI surface volatility is the norm. ~70% of pages ranking in AI Overviews change over 2–3 months (per Authoritas research). Monitoring must treat AI visibility as a probabilistic signal with confidence intervals, not a deterministic rank equivalent.
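Treating visibility as a probabilistic signal can be as simple as running the same prompt repeatedly and reporting a confidence interval on the citation rate instead of a single number. The sketch below uses the Wilson score interval, a standard choice for proportions; the run counts are hypothetical, and no tool named here is confirmed to work this way.

```python
import math

def wilson_interval(cited: int, runs: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a citation rate (z = 1.96 gives about 95% confidence)."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    p = cited / runs
    denom = 1 + z**2 / runs
    center = (p + z**2 / (2 * runs)) / denom
    half_width = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return center - half_width, center + half_width

# Hypothetical: the brand was cited in 12 of 40 runs of the same prompt.
low, high = wilson_interval(cited=12, runs=40)
print(f"{low:.2f} to {high:.2f}")
```

With 12 citations in 40 runs the observed rate is 30%, but the interval spans roughly 18% to 45%, so a later reading of 25% is not evidence of a decline on its own.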

False positives create alert fatigue. Anomaly detection is powerful but error-prone. Calibrate thresholds carefully in the first 90 days. An alert system that fires too often trains teams to ignore it.

Anti-scraping constraints are growing. Platform countermeasures and litigation around scraping increase uncertainty for SERP data acquisition. Rely on first-party APIs and vendor platforms with clear data handling rather than scraping-based approaches where possible.

Privacy and compliance requirements apply. Any stack ingesting user-level analytics or storing query data across geographies must align with GDPR and other privacy regimes. Verify vendor data handling, DPA availability, and retention policies.

What to Expect Through 2028

Monitoring will expand from keywords to entities and narratives. AI answers compile information from multiple sources simultaneously, and tools are already evolving toward entity-level visibility across AI answers, social platforms, and video.

Higher-frequency data will become standard outside enterprise tiers. As AI Overview sources churn at the documented rates, weekly rank tracking will be insufficient for competitive verticals. Always-on monitoring will move to mid-market pricing within 18–24 months.

ROI measurement will become finance-native. Expect broader adoption of valuation frameworks that translate AI visibility into monetary proxies comparable to CPM, because stakeholders will demand comparability with paid media before approving expanded budgets. Similarweb has already published a framework (ABMV) for this translation.

Crawl governance becomes part of SEO operations. Cloudflare data shows AI and search crawler traffic increased 18% from May 2024 to May 2025, with GPTBot up 305%. Monitoring crawl behavior, server load, and content access rules is becoming a standard operational requirement for publishers and content-heavy businesses.
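A first step toward crawl monitoring is counting AI crawler requests in your own server logs. The sketch below assumes combined-format access logs, where the user agent is the last quoted field, and matches a short list of user-agent tokens documented by their operators; the list is illustrative, not exhaustive, and the sample log lines are invented.

```python
import re
from collections import Counter

# User-agent tokens published by their operators; extend for your own needs.
AI_CRAWLER_TOKENS = ("GPTBot", "OAI-SearchBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot")

# Captures the final quoted field of a combined-format log line (the user agent).
USER_AGENT_RE = re.compile(r'"([^"]*)"\s*$')

def count_ai_crawler_hits(log_lines):
    """Count requests per AI crawler token found in the user-agent field."""
    counts = Counter()
    for line in log_lines:
        match = USER_AGENT_RE.search(line)
        if not match:
            continue
        user_agent = match.group(1).lower()
        for token in AI_CRAWLER_TOKENS:
            if token.lower() in user_agent:
                counts[token] += 1
                break
    return counts

sample_log = [
    '203.0.113.5 - - [10/May/2025:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)"',
    '203.0.113.9 - - [10/May/2025:10:00:02 +0000] "GET /blog HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '198.51.100.7 - - [10/May/2025:10:00:05 +0000] "GET / HTTP/1.1" 200 1024 "https://www.google.com/" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

print(count_ai_crawler_hits(sample_log))
```

User-agent strings can be spoofed, so counts like these are a starting signal; verifying crawlers against operator-published IP ranges is a separate step not shown here.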

The Bottom Line

The moat moved from rankings to citations. Most companies are still measuring the wrong thing.

Search visibility used to mean one thing: ranking in Google's blue links. In 2026, it also means being cited in conversational AI responses that produce customers at roughly 4–5× the rate of traditional Google search. The companies that win the next five years aren't necessarily those with the highest domain authority. They're the ones who understand the new rules fast enough to act while citation patterns are still fluid and competitive slots are still available.

The window for establishing citation presence before patterns harden is approximately 12–18 months. As AI search grows from 8.2% to a projected 25% market share by late 2027, the companies appearing in 40% of relevant AI responses versus competitors' 10% will have built a compounding advantage that becomes exponentially harder to dislodge.

The tools exist. The pricing is accessible. The data is there. The only missing ingredient is the organizational decision to treat AI search visibility as infrastructure rather than an experiment.

If you want to start tracking your AI search visibility without stitching together five different tools, Atomic is worth starting with; there's a free tier, so you can see the data before committing to anything.

FAQ

Do I need AI search monitoring if my Google traffic is still growing?

Yes. Google traffic and AI visibility are not mutually exclusive, but strong Google performance does not guarantee AI citations.

The content and authority signals that help you rank on Google are often the same ones that get you cited in AI answers. But AI platforms also pull heavily from Reddit, Wikipedia, and community sources that traditional SEO tools don't track. Starting monitoring now gives you 12–18 months to build citation presence before patterns harden and competition intensifies.

How long does it take to see results from AI search optimization?

Most companies see measurable changes in AI citation frequency within 8–12 weeks of implementing monitoring recommendations: updating high-value pages with structured data, refreshing content with recent statistics, and targeting content gaps where competitors dominate.

The timeline depends heavily on how much foundational content you already have and whether AI crawlers can currently access your site without technical barriers.

Can I track AI search performance in Google Analytics without a dedicated tool?

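Partially. In GA4, you can filter for referral sources like "chatgpt.com" to see direct click-through traffic from AI platforms. User agents like "ChatGPT-User" are not reported in GA4; look for them in your server logs, where they indicate an AI platform fetching your pages.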

But this only captures people who clicked a link immediately; it misses the majority of AI search impact, which happens through brand awareness that converts days later as branded search. You also can't see citation frequency, share of voice versus competitors, sentiment, or which specific prompts trigger mentions. That requires a dedicated monitoring infrastructure.
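For the part you can measure yourself, a small helper can label referrer URLs from an analytics export as AI platforms. This is a minimal sketch: the hostname list is an assumption for illustration and should be checked against the referrers that actually appear in your own data.

```python
from typing import Optional
from urllib.parse import urlparse

# Hostnames are illustrative assumptions; verify them against your own referral data.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
    "claude.ai": "Claude",
}

def classify_referrer(referrer_url: str) -> Optional[str]:
    """Return the AI platform name for a referrer URL, or None if it is not a known AI source."""
    host = (urlparse(referrer_url).hostname or "").lower()
    for domain, platform in AI_REFERRER_HOSTS.items():
        # Match the domain itself or any subdomain of it.
        if host == domain or host.endswith("." + domain):
            return platform
    return None

print(classify_referrer("https://chatgpt.com/"))                    # ChatGPT
print(classify_referrer("https://www.perplexity.ai/search?q=crm"))  # Perplexity
print(classify_referrer("https://www.google.com/"))                 # None
```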