AI-driven search is scaling fast. ChatGPT has over 800 million weekly users. Perplexity handles 780 million queries a month. Google AI Overviews appear in 13% of all searches, and that number is growing.

And the tools designed to measure visibility inside these systems? Most of them are SEO dashboards with a fresh coat of paint.

They replaced rank positions with "share of voice." They swapped backlink counts for citation counts. They added a column labeled "AI" and called it a new product category. The interface changed. The mental model didn't.

This guide breaks down what AI visibility tools actually need to have, and why the gap between what most tools measure and how AI systems actually work is bigger than vendors want to admit.

Why AI Search Is Not Just SEO With a New Label

Traditional SEO is a ranking problem. You create content, build authority, earn links, and climb positions on a results page. The game is deterministic enough that rank tracking makes sense.

AI search is a synthesis problem. Large language models don't rank pages; they generate answers. They select sources probabilistically, cite selectively based on intent and phrasing, and produce responses that vary with every query variation. There is no "position 1" to chase.

The citation gap is revealing. When SEMrush analyzed brand visibility across AI platforms, they found that only 6% to 27% of brands that appeared frequently in AI responses were also cited as authoritative sources. In fashion, just 3 brands on ChatGPT made both the "mentioned" and "cited" lists. Being talked about and being trusted operate on separate tracks, yet most tools still conflate the two metrics into a single "AI visibility score."

Scale justifies investment in measurement. But scale doesn't validate the approach.

8 Features That Every AI Visibility Tool Should Have

1. Multi-Engine Coverage, Not Single-Model Snapshots

AI visibility is not one channel. It's five or six different channels that happen to use similar interfaces.

ChatGPT, Perplexity, Gemini, Copilot, Claude, and DeepSeek use different retrieval pipelines, different freshness heuristics, different citation rules, and different source selection algorithms.

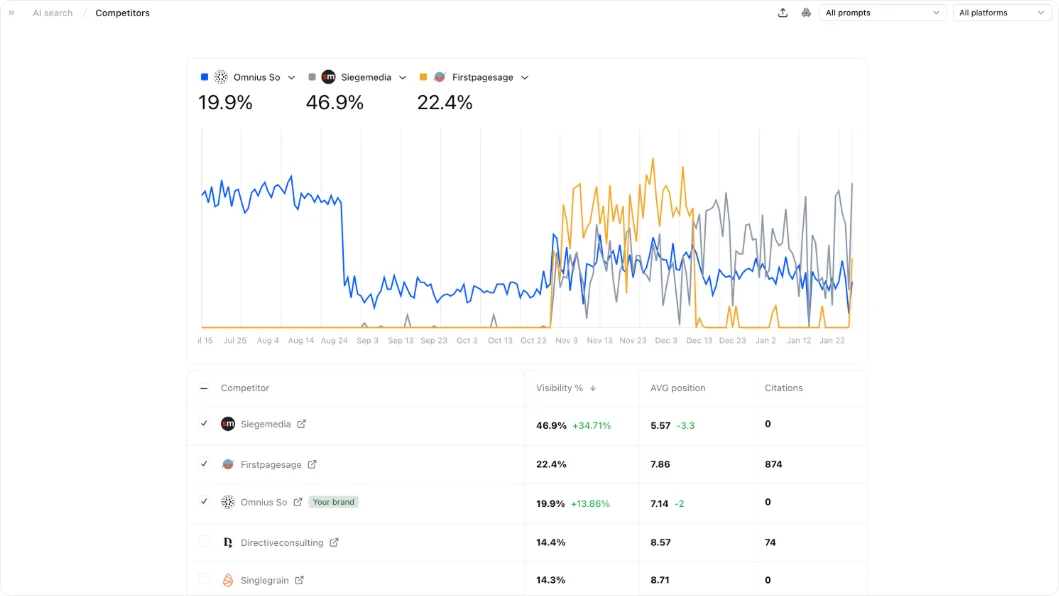

A brand dominating ChatGPT recommendations might be completely invisible in Gemini responses to identical queries. Optimization strategies can actively conflict across engines; what improves citation frequency in one system might reduce it in another.

The market data shows why this matters:

- ChatGPT commands 80.5% of the AI assistant market and captures 77.97% of AI referral traffic

- Perplexity holds just 7.9% market share but generates 15.10% of AI referral traffic, a signal of distinct user intent

- Conversion rates diverge sharply: Claude users convert at 5%, ChatGPT at 16%, Perplexity at 10.5%

Each platform attracts different audiences with different needs. A tool tracking only one engine creates a false sense of certainty. When AI visibility tools first launched in early 2024, many tracked only ChatGPT. Within twelve months, comprehensive platforms had standardized on monitoring six or more systems. The convergence happened because single-engine tracking proved insufficient.

What this means in practice: Effective multi-engine coverage isn't just parallel tracking. It demands understanding that each platform serves different use cases, updates retrieval behavior on different schedules, and applies different trust signals. If a tool shows uniform visibility across all engines, that's a red flag, not a good sign. The platforms diverge by design.

2. Prompt-Level and Intent-Level Tracking, Not Keyword Proxies

AI systems don't retrieve information based on keywords. They respond to intent expressed through prompts.

Someone researching "marketing attribution software" through ChatGPT might phrase it as:

- "Explain how marketing attribution works"

- "Compare multi-touch vs last-click attribution models"

- "Which attribution tool integrates with Salesforce and HubSpot"

Each variation triggers different retrieval paths, cites different sources, and serves a different stage of the decision journey. Keyword-style abstractions flatten this into false equivalence; "mentions for 'attribution software'" tells you nothing useful about where competitive displacement actually happens.

Intent categories matter more than search volume proxies. Research intent, comparison intent, evaluation intent, and decision intent each require different content strategies and produce different visibility patterns.

A brand that's invisible during research queries but prominent in decision-stage prompts has a fundamentally different problem than one mentioned broadly but never cited as authoritative.

This is where most tools fail completely. They track "mentions for 'project management software'" when what matters is whether the brand appears in "which project management tool has the best API" versus "how to choose project management software for remote teams." These are different reasoning paths and different competitive contexts. Tools that can't distinguish them measure broad exposure, not competitive positioning at decision moments.
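A minimal sketch of what intent-level tracking could look like, assuming each tracked prompt has already been labeled with an intent category and checked for brand presence. The observation rows and the `visibility_by_intent` helper are hypothetical and only illustrate the grouping, not any vendor's method.

```python
from collections import defaultdict

# Hypothetical observations: (intent category, prompt, did the brand appear?)
observations = [
    ("research", "Explain how marketing attribution works", False),
    ("comparison", "Compare multi-touch vs last-click attribution models", False),
    ("decision", "Which attribution tool integrates with Salesforce and HubSpot", True),
    ("decision", "Which attribution tool has the best API", True),
]


def visibility_by_intent(rows):
    """Return, per intent category, the share of prompts where the brand appeared."""
    appeared_count = defaultdict(int)
    total_count = defaultdict(int)
    for intent, _prompt, appeared in rows:
        total_count[intent] += 1
        appeared_count[intent] += int(appeared)
    return {intent: appeared_count[intent] / total_count[intent] for intent in total_count}


rates = visibility_by_intent(observations)
for intent, rate in rates.items():
    print(f"{intent}: {rate:.0%} of prompts")
```

A single blended rate across these four prompts would read as 50% visibility. The per-intent view shows the brand is absent from research and comparison prompts and present only at the decision stage, which is a different problem to solve.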

3. Citation and Source Attribution Analysis

Being mentioned by an AI system is not the same as being cited.

Unattributed mentions appear in conversational responses but carry no explicit attribution signal. Citations signal that the content met thresholds for accuracy, relevance, and trustworthiness in that specific context. Mentions measure exposure. Citations measure influence. The strategic implications are completely different.

The SEMrush research mentioned earlier quantified this gap across verticals:

- Finance: 22–27 brands appeared in both "mentioned" and "cited" categories

- Fashion: just 3 brands on ChatGPT made both lists

- Overall: only 6–27% of the most-mentioned brands ranked as top-cited sources

Ahrefs added another layer: 76% of AI Overview citations come from pages already ranking in Google's top ten. The remaining 24% come from elsewhere, meaning content structure, expertise signals, and "quotability" influence citation selection independent of traditional SEO strength.

Any tool that reports "AI mentions" without separating citation types is measuring noise.

What a mature tool must track:

- Unattributed mentions

- Supporting citations (your content used for grounding but not explicitly linked)

- Primary source citations (explicit attribution with links)

- Which specific pages are being cited

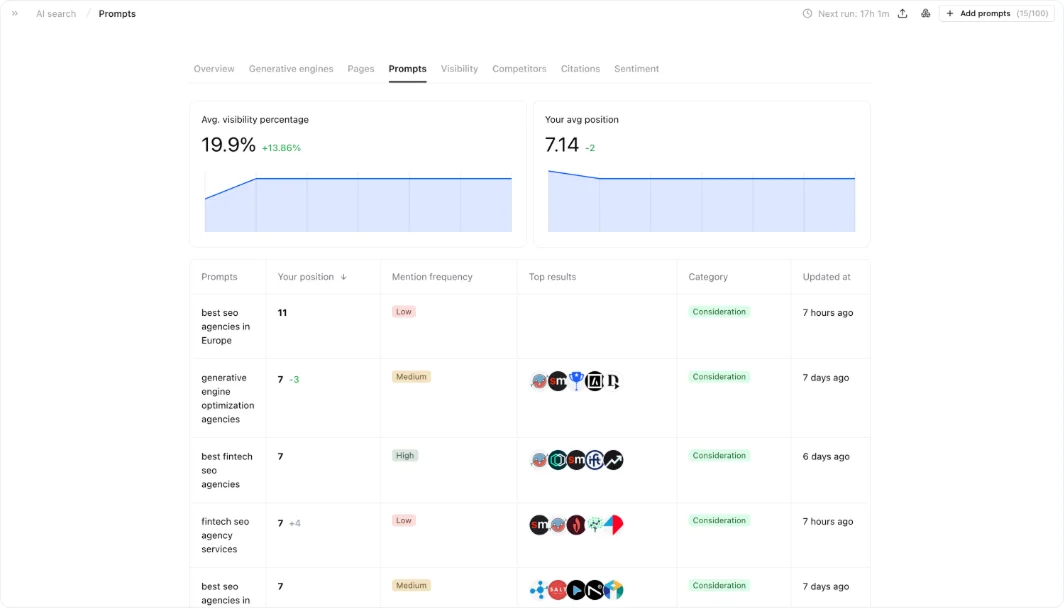

- Which competitor pages are being cited instead

- Where citation gaps exist across different intent categories
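The first three categories in the list above can be sketched as a simple classifier. This assumes a captured answer exposes its visible links and, where the engine makes them available, its grounding sources; many engines do not expose grounding data, so `grounding_urls` may be empty in practice. `CapturedAnswer` and `classify` are hypothetical names.

```python
from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass
class CapturedAnswer:
    text: str
    linked_urls: list = field(default_factory=list)     # links shown to the user
    grounding_urls: list = field(default_factory=list)  # sources used but not linked (assumed observable)


def _on_domain(url, domain):
    """True if the URL's host is the domain itself or one of its subdomains."""
    host = urlparse(url).hostname or ""
    return host == domain or host.endswith("." + domain)


def classify(answer, brand, domain):
    """Return the strongest attribution type found for the brand in one answer."""
    if any(_on_domain(u, domain) for u in answer.linked_urls):
        return "primary_citation"
    if any(_on_domain(u, domain) for u in answer.grounding_urls):
        return "supporting_citation"
    if brand.lower() in answer.text.lower():
        return "unattributed_mention"
    return "absent"


answer = CapturedAnswer(
    text="Acme is a popular option for attribution.",
    linked_urls=["https://competitor.example/guide"],
)
print(classify(answer, brand="Acme", domain="acme.example"))
```

Here the brand is named in the answer while a competitor's page receives the link, so the result is an unattributed mention: exposure without influence.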

In traditional SEO, backlinks became a proxy for trust. In AI search, citations are closer to trust, but they're contextual, selective, and intent-bound. A source cited for technical explanations might never appear in purchase-decision prompts. Conflating these creates a measurement layer that looks comprehensive while actively obscuring what matters.


4. Page-Level Visibility, Not Domain-Level Vanity Metrics

AI engines don't retrieve domains. They retrieve documents.

This distinction changes everything about what's worth measuring. Domain Authority became a convenient shorthand in SEO because it abstracted site-wide trust into a single number. AI visibility tools inherited this pattern, offering domain-level scores that summarize brand presence across platforms. These scores feel actionable. They rarely are.

A domain score showing "strong AI visibility" might mask the fact that 90% of citations come from three pages, while hundreds of others contribute nothing. It might hide that certain content types (long-form guides, data studies, and comparison frameworks) get cited repeatedly while product pages disappear from reasoning chains. Domain metrics aggregate away exactly the patterns that matter for improvement.

Page-level visibility reveals:

- Which content structures AI systems prefer

- How freshness, depth, and clarity influence retrieval decisions

- Where internal competition exists (multiple pages targeting similar intents, weakening each other)

- Which pages need optimization versus consolidation

- Which formats should be scaled

Domain-level AI scores are the equivalent of Domain Authority in this new context: easy to quote in executive reports, hard to act on in practice. When a domain score drops, what changed? Which pages lost visibility? In which intent categories? Against which competitors? Domain metrics can't answer these questions. Page-level tracking can.
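One way to see what a domain score hides is to measure how concentrated citations are across pages. The sketch below assumes you already have a flat list of cited URLs or paths collected from captured answers; the sample data and the `top_page_share` helper are hypothetical.

```python
from collections import Counter


def top_page_share(cited_urls, top_n=3):
    """Return (share of all citations going to the top_n pages, those pages with counts)."""
    counts = Counter(cited_urls)
    total = sum(counts.values())
    if total == 0:
        return 0.0, []
    top = counts.most_common(top_n)
    share = sum(n for _, n in top) / total
    return share, top


# Hypothetical citation log: one entry per observed citation.
cited = ["/guide"] * 6 + ["/data-study"] * 2 + ["/comparison"] + ["/pricing"]

share, top_pages = top_page_share(cited)
print(f"Top 3 pages account for {share:.0%} of citations")
for page, n in top_pages:
    print(f"  {page}: {n}")
```

A high share means the domain's visibility rests on a handful of documents, which is where optimization and protection effort should go first.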

5. Correlation With Business Outcomes

AI visibility is only valuable if it changes business outcomes. Most tools make this connection impossible to see.

Perfect attribution is unrealistic; the path from AI mention to conversion involves too many variables and too much time lag. But a directional signal is achievable. AI visibility tools should help teams correlate exposure with assisted conversions, brand search lift, demo requests, and pipeline acceleration.

One case study illustrates what outcome-focused optimization looks like at scale:

HubSpot implemented semantic optimization targeting how AI systems understand and reference their content. The result was a 642% increase in AI citations. Over the same period, the blog's contribution fell to just 10% of total leads, down from being the primary lead source. They positioned aggressively on AI visibility anyway, claiming to be "cited in LLMs more than any other CRM."

The strategic bet: declining traffic matters less than increasing citation frequency if citations drive brand authority that compounds over time.

Without connection to outcomes, AI visibility becomes content theater: impressive metrics, unclear impact. Founders don't fund dashboards; they fund leverage. Tools that can't help teams correlate AI visibility with pipeline or market position are measuring the wrong things.
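The directional signal described in this section can be approximated with a lagged correlation between a visibility series and an outcome series. This is a rough sketch under stated assumptions: the weekly numbers are invented, `pearson` and `lagged_pearson` are hypothetical helpers, and a correlation computed this way indicates direction only, not causation.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length with at least 2 points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / sqrt(var_x * var_y)


def lagged_pearson(exposure, outcome, lag=0):
    """Correlate exposure in week t with outcome in week t + lag."""
    if lag < 0:
        raise ValueError("lag must be non-negative")
    return pearson(exposure[: len(exposure) - lag], outcome[lag:])


# Hypothetical weekly data: AI citations and branded search volume.
weekly_citations = [10, 12, 15, 14, 20, 22]
branded_searches = [100, 110, 130, 125, 160, 170]

print(f"same week: r = {lagged_pearson(weekly_citations, branded_searches):.2f}")
print(f"one-week lag: r = {lagged_pearson(weekly_citations, branded_searches, lag=1):.2f}")
```

Trying a few lags is a cheap way to respect the time gap between an AI mention and a downstream action, without pretending to per-visitor attribution.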

6. Change Detection and Volatility Monitoring

AI systems change quietly. Visibility shifts happen silently.

Retrieval behavior shifts. Citation patterns evolve. Previously stable prompts stop producing the same sources. New competitors appear in reasoning chains. Old advantages erode without an obvious cause. AI visibility rarely breaks overnight; it decays gradually, then suddenly. By the time traffic signals degrade or pipeline quality drops, the underlying visibility problem has been compounding for weeks.

The challenge is that AI platforms don't announce retrieval changes the way Google announces core algorithm updates. A content format that generated consistent citations for six months might suddenly stop working. A competitor adopting a new content structure might displace established sources without any visible trigger.

Teams typically discover these changes reactively, after noticing traffic declines, brand search drops, or salespeople reporting that prospects mention competitors they haven't heard of.

What effective change detection requires:

- Historical baselines for each prompt cluster

- Anomaly identification against those baselines

- Competitive context (is the decline isolated or category-wide?)

- Engine-specific behavior change detection

- Alerts for sudden citation drops or competitor replacements

A 20% drop in citations might be catastrophic if competitors maintained stable visibility. The same decline might be unremarkable if the entire category experienced similar drops due to platform algorithm changes. Without comparative context, teams can't distinguish signal from noise.
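A minimal sketch of the two checks described above: an anomaly test against a historical baseline, and a comparison of your own change with the category's change. The z-score threshold, the 5-point tolerance, and all function names are assumptions chosen for illustration, not established defaults.

```python
from statistics import mean, stdev


def is_anomalous_drop(history, current, z_threshold=2.0):
    """Flag `current` if it sits more than z_threshold standard deviations below the baseline mean."""
    if len(history) < 2:
        raise ValueError("need at least 2 historical points for a baseline")
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return current < mu
    return (mu - current) / sigma > z_threshold


def relative_change(baseline, current):
    """Fractional change from baseline, e.g. -0.2 for a 20% drop."""
    return (current - baseline) / baseline


def classify_drop(own_change, category_change, tolerance=0.05):
    """Separate a brand-specific decline from one shared by the whole category."""
    if own_change >= 0:
        return "no_decline"
    if own_change < category_change - tolerance:
        return "isolated_decline"
    return "category_wide"


# Hypothetical weekly citation counts for one prompt cluster.
history = [50, 52, 48, 51, 49]
current = 40

print(is_anomalous_drop(history, current))
print(classify_drop(relative_change(50, current), category_change=-0.01))
print(classify_drop(relative_change(50, current), category_change=-0.18))
```

The same 20% drop is labeled an isolated decline when the category is flat and category-wide when competitors fell by a similar amount, which is the distinction the alerting logic needs to carry.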

In AI search, silence is not stability. Often, it is decay.

7. Actionable Recommendations, Not Raw Reporting

Reporting is not the problem. Translation is.

AI visibility touches content strategy, SEO, product positioning, and technical infrastructure. Tools that surface data without guidance push cognitive load back onto already stretched teams.

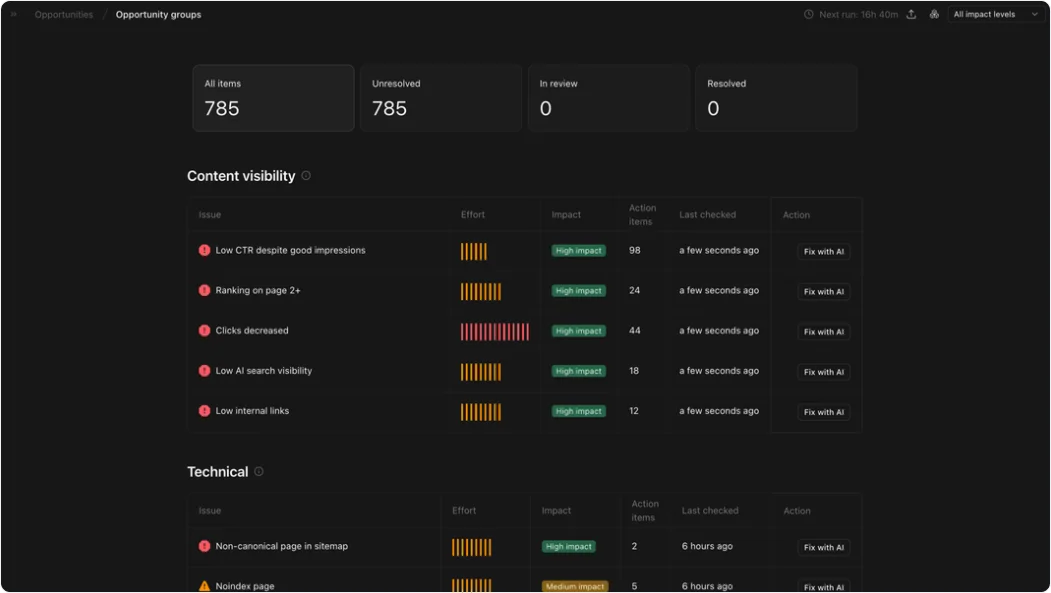

Marketing leaders don't need more dashboards showing citation counts, mention frequency, and share-of-voice percentages. They need answers to three specific questions: why visibility is low, what exactly is missing, and where action will have the highest impact.

A tool should flag when presence is weak despite strong content production. It should surface when branded mentions are absent despite high domain authority. It should alert when content volume is high but structural authority signals are missing.

Correlation data also reveals where teams waste effort. Companies publishing hundreds of articles monthly see minimal visibility improvement because content volume barely moves the needle (a correlation of just 0.194). Companies neglecting YouTube entirely miss the highest-leverage channel. Tools that can't surface these misalignments leave teams optimizing in the wrong direction: more content, less impact.

What actionable looks like: A tool reporting "citations down 15%" without explaining whether the decline came from competitive displacement, algorithm changes, or content structure issues is generating data, not insight. The best tools make the invisible visible, then make the visible actionable.

8. Technical Health and AI Crawlability

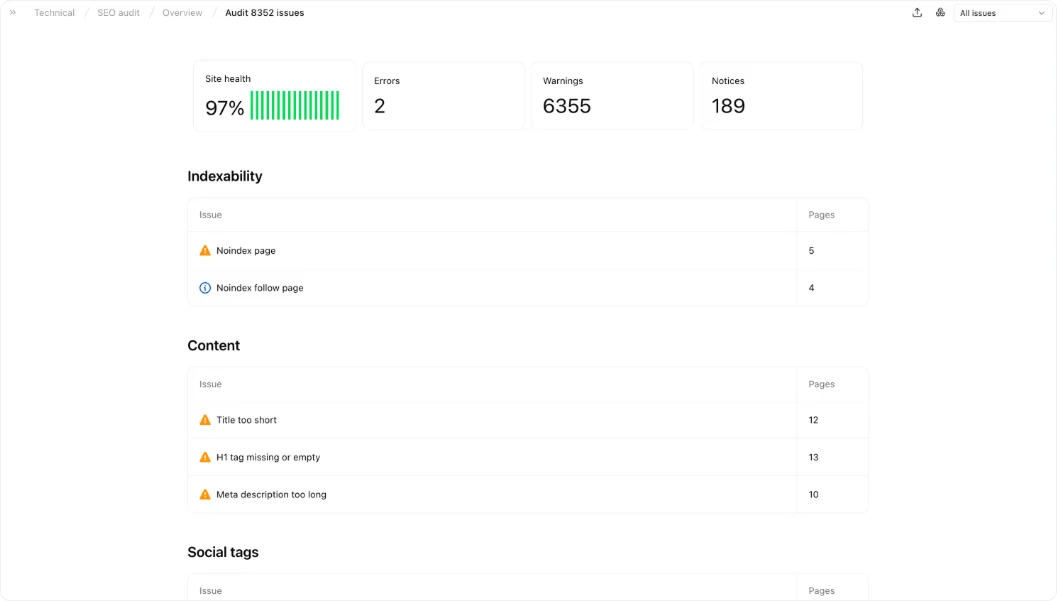

Visibility is impossible if the site isn't healthy enough to be discovered in the first place.

Most teams think about technical SEO and AI visibility as separate workstreams. They shouldn't be.

AI systems retrieve content from sites they can crawl, parse, and trust. A site with indexability errors, broken internal linking, blocked bot access, or poor content structure doesn't just underperform in Google; it becomes invisible to the retrieval pipelines that feed ChatGPT, Perplexity, and Gemini. The technical foundation is the same. The stakes are compounded.

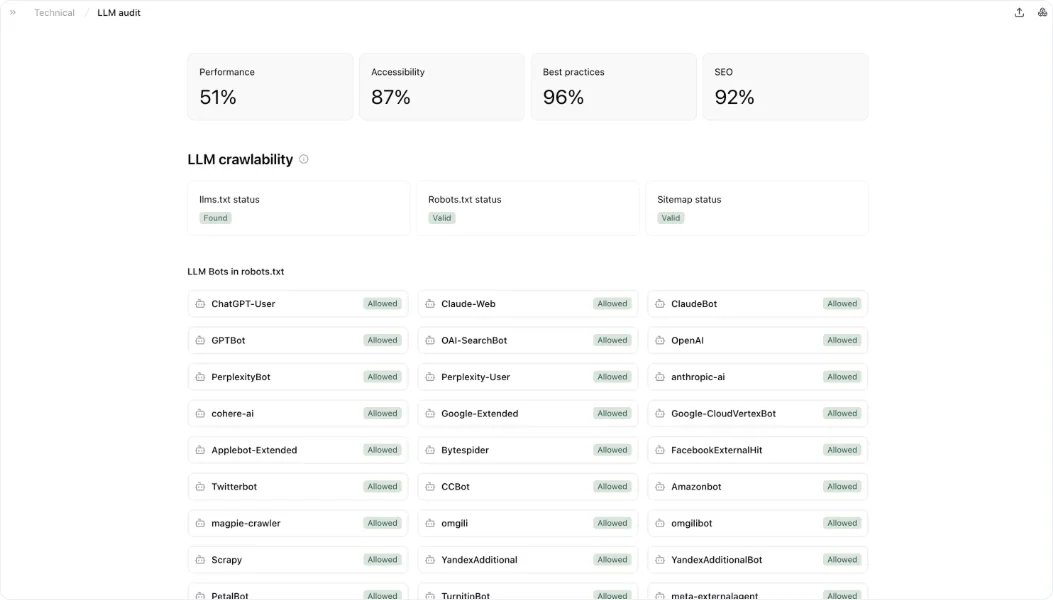

The health layer that matters for AI visibility goes beyond standard SEO audits. AI crawlers evaluate a different set of signals: is the content structured in a way that LLMs can chunk and process it?

Are the trust signals that AI systems use to assess source credibility present: authorship, citations, structured data, and E-E-A-T indicators? Is the robots.txt configuration accidentally blocking AI bots while allowing Googlebot?

These are distinct failure modes that a Google-centric audit will never surface.

The gap matters because the crawl ecosystem has fragmented. Different AI systems deploy different crawlers with different purposes. A robots.txt directive that correctly manages Googlebot may simultaneously block Perplexity's crawler or prevent content from being used for AI grounding.

Teams optimizing for traditional search often create technical configurations that undermine AI visibility without realizing it, not because of deliberate choices, but because the tooling never flagged the conflict.
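The robots.txt conflict described above can be checked with Python's standard-library `urllib.robotparser`. This is a sketch, not a full audit: the crawler tokens in `AI_BOTS` are assumptions that should be verified against each vendor's current documentation, and the `blocked_ai_bots` helper and sample file are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Assumed crawler tokens; verify against each vendor's published documentation.
AI_BOTS = ["GPTBot", "OAI-SearchBot", "ChatGPT-User", "PerplexityBot", "ClaudeBot", "Google-Extended"]


def blocked_ai_bots(robots_txt, url, ai_bots=AI_BOTS, reference_bot="Googlebot"):
    """List AI bots that are blocked from `url` even though the reference bot is allowed."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    if not parser.can_fetch(reference_bot, url):
        return []  # the page is blocked for traditional search too; a different problem
    return [bot for bot in ai_bots if not parser.can_fetch(bot, url)]


SAMPLE_ROBOTS_TXT = """\
User-agent: Googlebot
Allow: /

User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /private/
"""

conflicts = blocked_ai_bots(SAMPLE_ROBOTS_TXT, "https://example.com/blog/post")
print(conflicts)
```

In this sample the blog post is open to Googlebot and to bots covered by the wildcard rule, but closed to one AI crawler, which is exactly the kind of conflict a Google-centric audit would not report.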

The Technical Foundation: What Has to Work Under the Hood

Beyond the user-facing features, there's a technical layer that determines whether an AI visibility platform can actually be trusted.

Evidence-grade response capture is non-negotiable. A tool must store raw, reviewable evidence: the full AI response, position within lists, citations shown, and metadata about when and where the response was captured. "Show me the exact answer" should be one click away from any metric. This matters especially for brand risk scenarios where legal or communications teams need to see precisely what an AI system said about product safety or compliance claims.
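As a rough illustration of what an evidence record might hold, the sketch below stores the raw response with its capture metadata and derives a content fingerprint so later edits are detectable. The field names, the `Evidence` class, and the `capture` helper are all hypothetical; a production system would also need durable storage and access controls.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class Evidence:
    engine: str
    prompt: str
    response_text: str            # the full, unedited AI response
    citations: tuple              # URLs shown alongside the response
    list_position: Optional[int]  # brand's position in a list, if any
    captured_at: str              # ISO 8601 timestamp (UTC)
    locale: str                   # where the response was captured

    def fingerprint(self):
        """SHA-256 over the serialized record; changes if any field changes."""
        payload = json.dumps(asdict(self), sort_keys=True, ensure_ascii=False)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def capture(engine, prompt, response_text, citations, list_position=None, locale="en-US"):
    """Build an immutable evidence record stamped with the current UTC time."""
    return Evidence(
        engine=engine,
        prompt=prompt,
        response_text=response_text,
        citations=tuple(citations),
        list_position=list_position,
        captured_at=datetime.now(timezone.utc).isoformat(),
        locale=locale,
    )


record = capture("chatgpt", "Is Acme safe to use?", "Acme meets the standard.", ["https://acme.example/safety"], 1)
altered = replace(record, response_text="Acme fails the standard.")
print(record.fingerprint() != altered.fingerprint())
```

Storing the fingerprint next to each metric gives reviewers a way to confirm that the answer they are shown is the one that was originally captured.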

AI-bot crawlability diagnostics are increasingly essential. If AI agents can't crawl or interpret your site, every other optimization is wasted. The ecosystem has fragmented, and different crawler identities serve different purposes. Search inclusion, model training, and user-triggered fetches may each operate under different crawl rules and robots.txt controls. A bot control center that reads robots.txt directives, inspects logs for bot activity, and warns when controls unintentionally block inclusion is now a baseline requirement.

Multi-surface sampling architecture must account for the reality that AI features can use different models and techniques within the same platform, meaning responses vary not just by engine but by surface, geography, device type, and query phrasing. Controlling for these variables requires deterministic run windows, explicit locale and device parameterization, and transparent documentation of sampling methodology.
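Explicit parameterization can be as simple as enumerating every combination that will be sampled in a run. The sketch below assumes a fixed run window label and small illustrative value sets; `build_sampling_plan` and its parameters are hypothetical.

```python
from itertools import product


def build_sampling_plan(engines, locales, devices, phrasings, run_window):
    """Enumerate one sampling task per engine x locale x device x phrasing combination."""
    return [
        {"engine": e, "locale": loc, "device": d, "prompt": p, "run_window": run_window}
        for e, loc, d, p in product(engines, locales, devices, phrasings)
    ]


plan = build_sampling_plan(
    engines=["chatgpt", "perplexity"],
    locales=["en-US", "de-DE"],
    devices=["desktop", "mobile"],
    phrasings=[
        "Explain how marketing attribution works",
        "Compare multi-touch vs last-click attribution models",
        "Which attribution tool integrates with Salesforce and HubSpot",
    ],
    run_window="2025-01-06T09:00Z/2025-01-06T10:00Z",
)
print(len(plan), "sampling tasks")
```

Writing the plan down before a run makes the methodology inspectable: anyone reading a metric can see which surfaces, locales, and phrasings it was computed from.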

How to Build an AI Visibility Practice That Actually Works

Start with the measurement substrate. Without trusted data, everything else is noise. Multi-surface coverage, prompt libraries, evidence capture, and mention/citation extraction have to work reliably before anything else matters.

Add diagnostics before action. Competitive share-of-voice benchmarking, coverage gap analysis, and crawlability audits convert raw data into decisions. Don't optimize until you understand what's broken and why.

Measure the outcomes, not just the activity. Track AI referral sessions, conversion rates from AI referrals, and assisted conversions alongside citation frequency and share of voice. If visibility improvements don't eventually show up in business metrics, something is wrong with either the measurement or the strategy.

Build historical baselines immediately. The data you don't collect today can't be analyzed six months from now. Historical memory is the only reliable advantage in an unstable system.

Meet Atomic: Your Comprehensive AI Visibility Tool

Most AI visibility tools solve one piece of the puzzle. Atomic was built to cover all of it, tracking how your brand performs across AI engines, connecting that visibility to real business outcomes, and automating the optimization work that follows.

Here's how Atomic directly addresses the features that matter most:

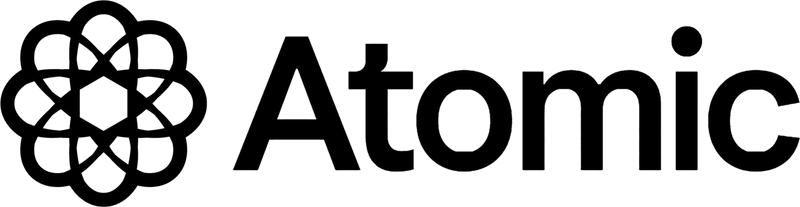

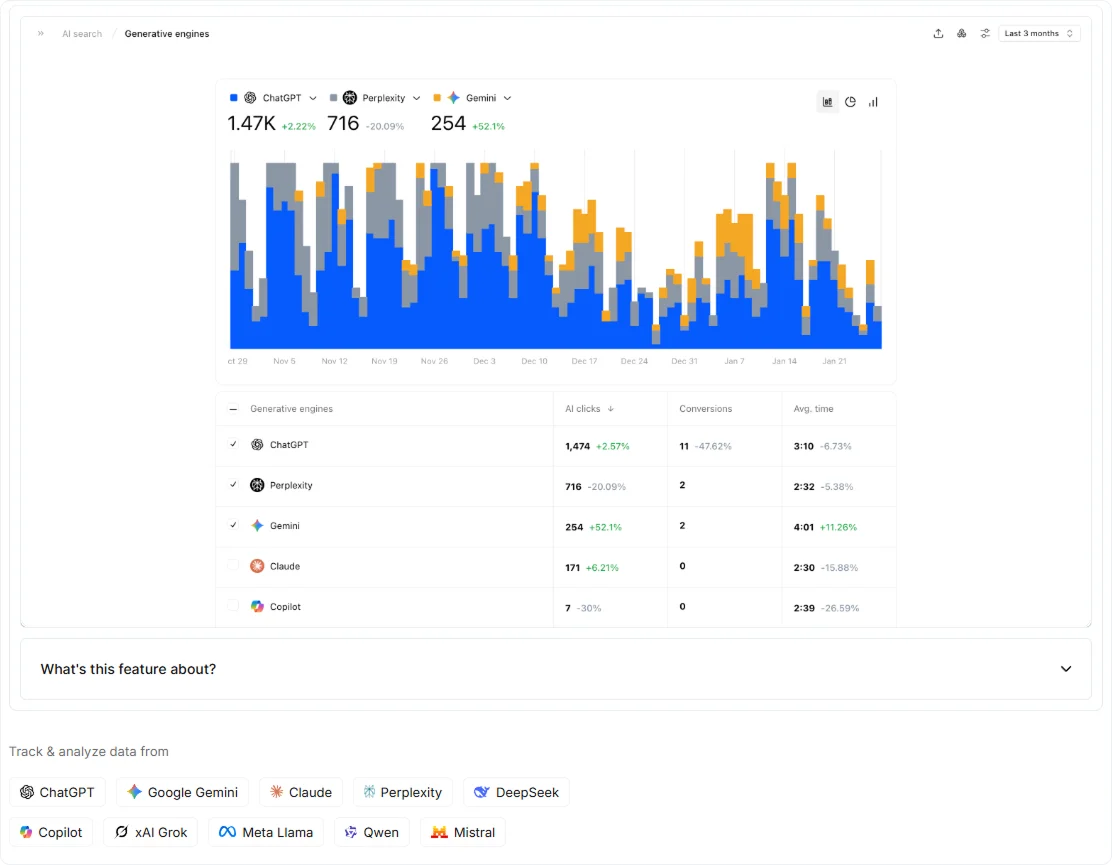

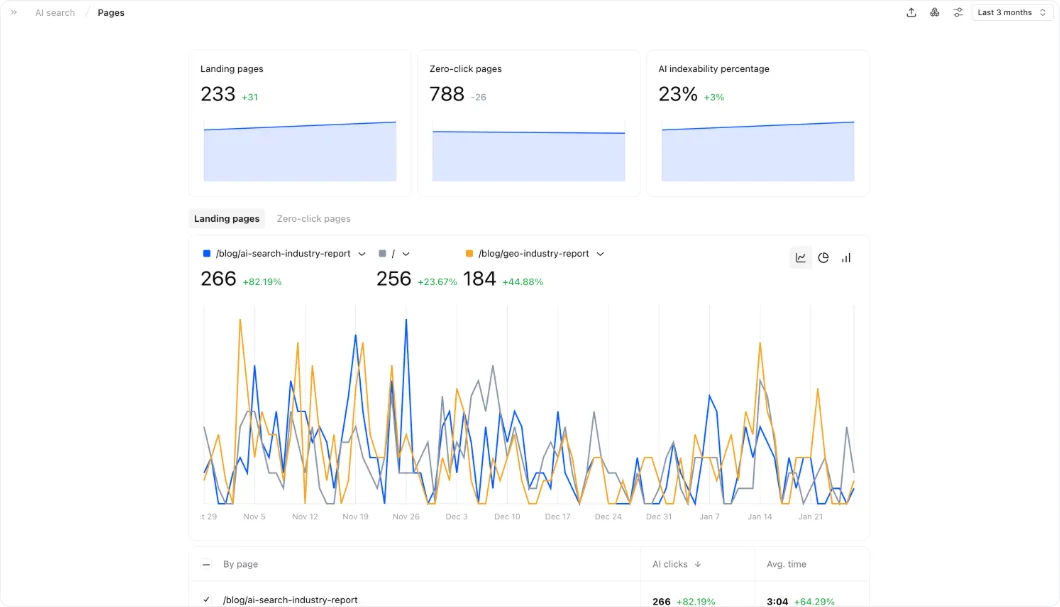

- 10-engine coverage in one dashboard: ChatGPT, Perplexity, Gemini, Claude, Copilot, DeepSeek, Grok, and more, with prompt tracking categorized by funnel stage (Awareness, Consideration, Decision) and average position per engine.

- Citation and sentiment analysis: distinguishes your citations from competitors', classifies how AI engines describe your brand, and traces sentiment back to the specific sources AI models are drawing from.

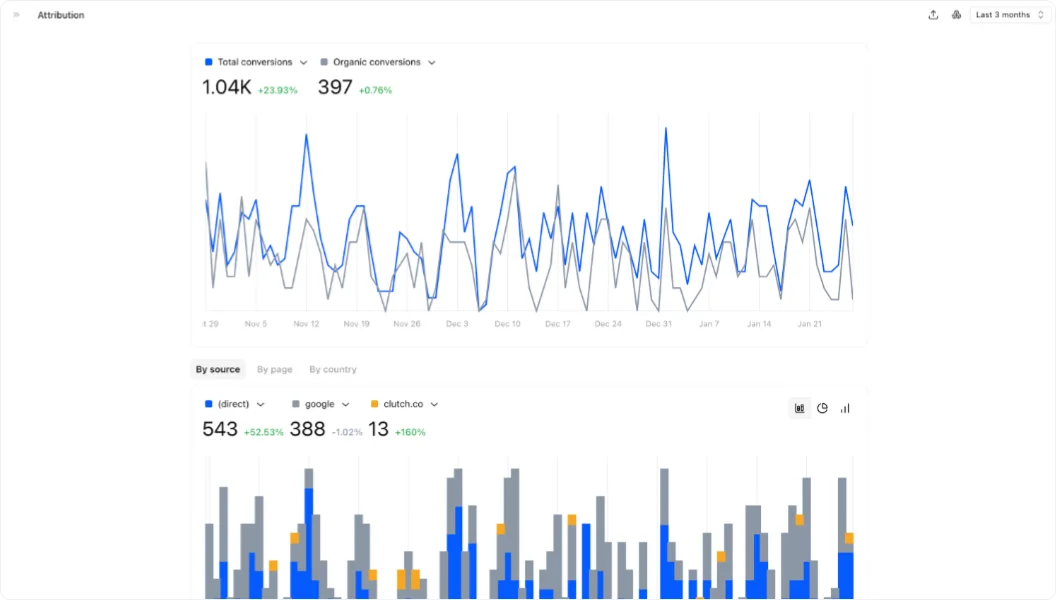

- Business outcome attribution: connects AI search visits to actual conversions with session quality broken down by source engine, so you know which platforms drive results, not just traffic.

- Technical LLM audit: dedicated crawlability diagnostics from an AI-crawler perspective, covering LLM bot permissions, content structure, trust signals, and schema markup, beyond what a standard SEO audit catches.

- Unified Google + AI analytics with AI agents: Google Search Console and GA4 alongside AI visibility data in the same reporting layer, with deployable agents that automate recurring tasks like content refreshment, keyword decay monitoring, and crawlability fixes.

The Bottom Line

The official guidance from Google is clear: no special optimizations are required for AI features beyond strong SEO fundamentals and crawlability.

That makes AI visibility tooling less about secret hacks and more about an engineering-grade measurement system that connects technical accessibility, content comprehensiveness, and competitive reputation signals to observed inclusion in AI answers, and ultimately to measurable business outcomes.

Current AI visibility tools resemble early-days SEO software: lots of metrics, unclear causality, optimization based on correlation rather than understanding. The tools that eventually dominated SEO weren't the ones with the most data. They were the ones that helped practitioners develop better mental models of how search engines worked.

The same pattern will repeat in AI search. The tools that win won't have the most charts. They'll be the tools that help teams understand why AI systems trust certain sources, how reasoning paths form, and where visibility erodes before traffic signals degrade.

Start with Atomic, and win the AI Visibility race.

Frequently Asked Questions

Is AI visibility the same as SEO?

Not exactly, but SEO remains the foundation. If your content isn't discoverable by traditional search, it's largely invisible to AI systems too. The difference is that strong SEO rankings are necessary but no longer sufficient.

AI systems layer additional signals on top, such as content structure, source authority, quotability, and branded presence, which determine whether a well-ranking page gets cited or ignored in a generated answer. Think of SEO as the price of entry, and AI visibility work as what you do once you're in the room.

How do I know if my brand is being cited or just mentioned in AI responses?

You need a tool that separates the two explicitly. An unattributed mention means your brand appeared in a response with no link or source credit. A citation means the AI engine flagged your content as a trusted source. Most tools conflate them into a single "visibility score," which is why the number can look good while the actual influence on buyer decisions stays flat.

Do I need a separate tool for AI visibility, or will my existing SEO platform cover it?

For most teams, existing SEO platforms don't cover it adequately. Traditional tools weren't built to query AI engines, parse generative responses, track citation attribution, or audit LLM crawlability. Some major platforms have added AI visibility modules, but coverage is often limited to one or two engines. If AI-driven discovery is becoming a real channel for your business, it warrants dedicated measurement.